At a Glance

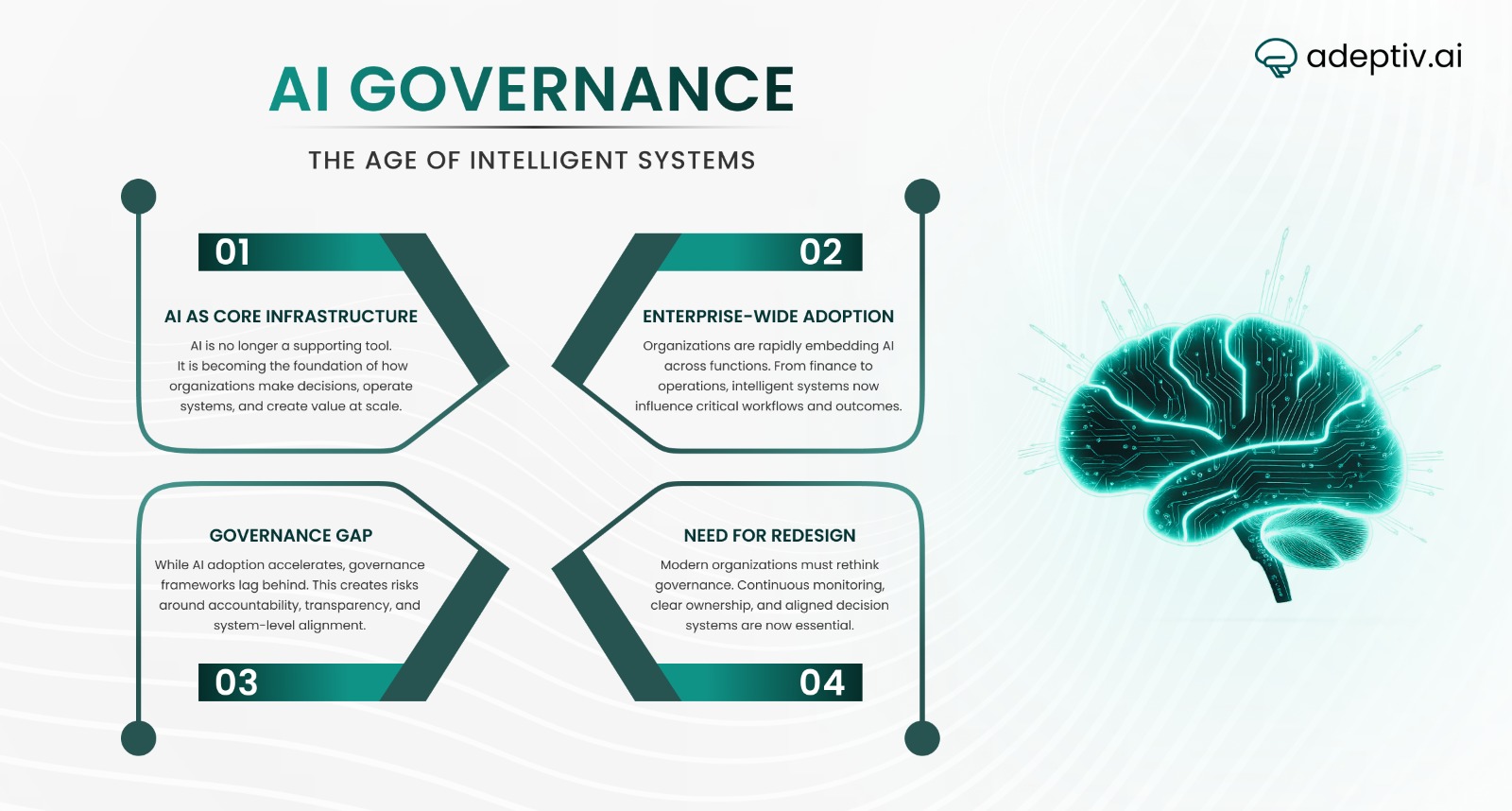

- The institutionalization of artificial intelligence is rapidly shifting AI from a technical tool to a core decision infrastructure within organizations

- Research from the MIT Sloan School of Management and the Stanford Institute for Human-Centered AI shows that AI is now deeply embedded in operational systems

- Most enterprises now use AI in at least one business function, and adoption has reached unprecedented scale

- As AI increasingly drives decisions in finance, healthcare, hiring, and logistics, traditional governance models are becoming obsolete

- Institutions like the OECD AI Policy Observatory, the Partnership on AI, and the AI Now Institute highlight the growing need for accountability and transparency

- Organizations must redesign governance to manage intelligent systems that continuously evolve and shape outcomes

Introduction: Artificial Intelligence as Institutional Infrastructure

Most discussions around artificial intelligence still frame it as a tool — something that drives efficiency, automates tasks, or enhances innovation.

That framing is no longer sufficient.

A deeper shift is underway: AI is moving from the edges of organizations into the core, where it increasingly shapes decisions, not just processes. Decisions once owned by human specialists — in financial risk, medical diagnosis, hiring, and logistics — are now being informed, and in some cases determined, by AI systems embedded in daily operations.

The data reinforces the scale of this shift. Research from the Stanford Institute for Human-Centered AI shows that about 78% of organizations have adopted AI in some capacity, with over 70% integrating generative AI into workflows such as marketing, analytics, and customer service.

But adoption is not the story. Institutionalization is.

AI is no longer experimental. It is becoming part of the decision-making infrastructure of modern enterprises. And as these systems expand across functions, they begin to influence not just isolated outcomes, but entire operational and strategic directions.

This creates a fundamental challenge:

Organizations are scaling AI systems faster than they are evolving the governance required to control them.

Traditional governance models — designed for static, predictable software — are not built for systems that learn, adapt, and evolve over time. As AI becomes embedded in how institutions function, governance can no longer be treated as a technical afterthought.

It must be redesigned as a core organizational capability.

MIT Insight Box 1: Enterprise AI Adoption at Scale

Key Data Points

- 78% of organizations use AI in at least one business function.

- More than 70% of enterprises report deploying generative AI tools.

- AI-powered systems are now widely used across marketing, finance, operations, and risk management.

These trends illustrate that AI adoption has reached a level where governance must operate at the enterprise and institutional level, not merely at the project level.

From Experimental Technology to Organizational Capability

Artificial intelligence has moved through a clear evolution — from research labs to real-world deployment — but the most important shift is happening now.

AI is no longer a standalone capability. It is becoming an organizational system.

Today, AI is embedded across critical business functions — from fraud detection and customer analytics to predictive maintenance and global supply chain optimization. But more importantly, these systems are no longer operating in isolation. They are increasingly interconnected, influencing multiple workflows, datasets, and decision points at once.

This is where most organizations get it wrong.

They continue to treat AI as a series of discrete technical projects, rather than as a coordinated decision layer across the enterprise. Research from the MIT Sloan School of Management consistently shows that fragmented AI initiatives fail to scale because they lack integration across data, processes, and governance.

The result is not transformation — it’s fragmentation.

Scaling AI is not about deploying more models. It’s about building a coherent system of decision-making.

Institutionalizing AI, therefore, requires more than technical implementation. It demands a fundamental redesign of:

- Operating models

- Risk management systems

- Governance structures

Until organizations make that shift, AI will remain powerful — but underutilized.

The Alignment Challenge in Enterprise AI

One of the biggest barriers to scaling AI is not technology — it’s alignment.

Most organizations deploy AI across functions, but fail to ensure those systems are aligned with business strategy, governance, and each other. Research from the MIT Sloan School of Management highlights that without this alignment, AI initiatives struggle to deliver meaningful impact.

Alignment here is not just technical — it is organizational.

AI systems:

- Rely on evolving data

- Adapt over time

- Operate across multiple departments

Without coordination, they create fragmented decision environments instead of unified intelligence.

For example, a credit risk model may use different assumptions than a fraud detection or customer analytics system. Individually, each model may perform well. But together, they can produce conflicting outputs, unclear accountability, and increased regulatory exposure.

The risk is not that AI systems fail — it’s that they succeed in isolation but fail as a system.

This is the hidden challenge of enterprise AI.

Without unified oversight, organizations don’t scale intelligence — they scale inconsistency.

MIT Insight Box 2: The Enterprise AI Value Gap

Despite widespread adoption of AI technologies, only a small percentage of organizations achieve meaningful business impact.

Research observations:

- About 78% of enterprises report using AI technologies.

- However, only about 5% of companies successfully scale AI to deliver measurable enterprise value.

This gap highlights a central challenge: AI technology alone does not create transformation.

Organizations must redesign governance, operating models, and accountability systems to fully realize AI’s potential.

AI as a Socio-Technical System

A significant finding from recent research is that AI must be viewed as a socio-technical system rather than a purely technical artifact. Organizations such as the AI Now Institute emphasize that AI technology interacts with social structures, organizational hierarchies, and regulatory settings.

AI systems influence decisions that can affect public safety, healthcare outcomes, financial access, and career prospects. As a result, governance systems need to address fairness, accountability, and transparency in addition to technical reliability.

Groups such as the Partnership on AI have promoted collaborative governance approaches that bring together policymakers, researchers, and business executives. These models emphasize interdisciplinary oversight of AI and recognize that responsible governance must include ethical, legal, and societal perspectives.

The Global Governance Landscape

The institutionalization of AI has also attracted increasing attention from governments and international organizations. Platforms such as the OECD AI Policy Observatory provide global frameworks designed to guide the responsible development and deployment of AI technologies.

At the same time, research institutions and policy organizations are exploring mechanisms to ensure transparency and accountability within automated systems. These initiatives reflect a broader recognition that AI governance cannot be addressed solely within individual organizations.

Instead, governance must operate across multiple layers:

- Institutional governance within organizations

- Regulatory frameworks at the national level

- International coordination through policy institutions

MIT Insight Box 3: The Emerging AI Governance Gap

While AI adoption is expanding rapidly, governance capabilities have not kept pace.

Recent research highlights several concerning trends:

- 89% of organizations report using AI tools in daily operations.

- Yet only 12% of enterprises report having full visibility into AI usage across departments.

- Approximately 73% of employees use AI tools without formal IT approval, creating what researchers describe as shadow AI.

These statistics illustrate why enterprise governance frameworks must evolve to address the risks associated with decentralized AI adoption.

Why Traditional Governance Models Are No Longer Sufficient

Most organizations are trying to govern AI using frameworks built for traditional software.

That approach is fundamentally flawed.

Traditional IT governance assumes systems that are:

- Predictable

- Stable

- Fully testable before deployment

AI systems break all of these assumptions.

They:

- Continuously learn from new data

- Evolve beyond their original training behavior

- Interact with other systems in dynamic, often unpredictable ways

This creates a new reality:

AI systems cannot be governed through static controls — they require continuous oversight.

Yet many organizations still rely on outdated governance models designed for one-time validation and periodic review. In an AI-driven environment, that’s not enough.

Effective governance must shift toward:

- Continuous monitoring of model performance

- Ongoing validation and recalibration

- Real-time visibility into how decisions are being made

Without this shift, risk compounds quickly. As AI adoption scales — often without centralized oversight — organizations face growing exposure to security, compliance, and operational failures.

Industry forecasts already point to this trajectory, with a significant share of enterprises expected to face AI-related incidents due to unmanaged or poorly governed systems.

The issue is not just technical complexity.

It’s the mismatch between how AI systems behave — and how organizations are trying to control them.

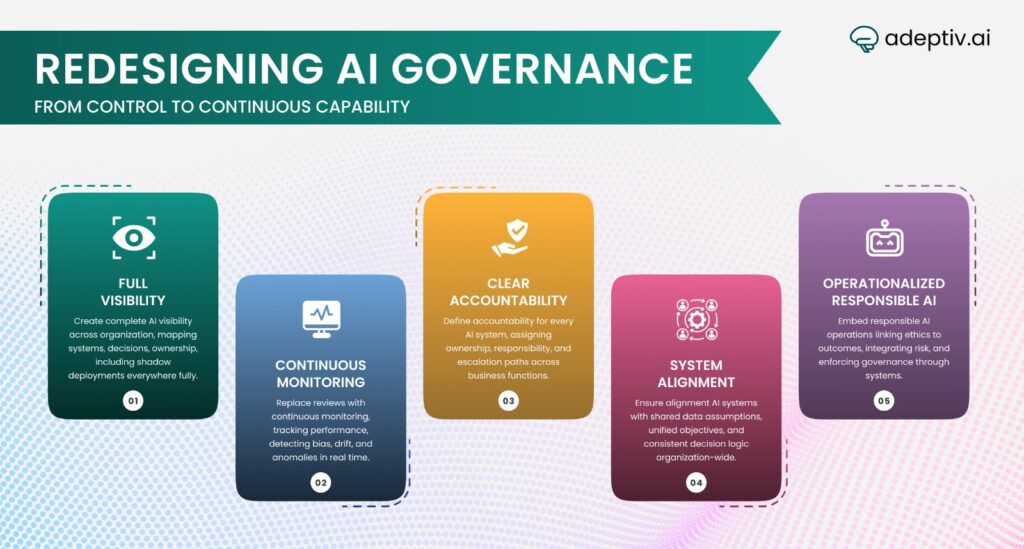

Redesigning Governance for Intelligent Systems

If governance is the bottleneck, then incremental fixes won’t work. Organizations need a fundamental redesign — one built for systems that learn, evolve, and operate at scale.

This is not about adding more controls. It’s about building governance as a capability.

1. Build Full Visibility — Not Partial Awareness

Most organizations don’t actually know where AI is being used.

That’s the first failure.

Leaders need a complete inventory of AI systems:

- Where they are deployed

- What decisions they influence

- Who owns them

This must include shadow AI — not just officially approved systems.

You cannot govern what you cannot see.
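As a rough illustration of what such an inventory could look like in practice, the sketch below models one record per AI system and flags two gaps: systems with no named owner and systems in use without formal approval (shadow AI). The class name, field names, and example entries are hypothetical and chosen only for illustration; a real inventory would likely live in a governance or asset-management tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    """One inventory entry. All field names are illustrative assumptions."""
    name: str
    deployed_in: str                  # where the system is deployed
    decisions_influenced: list[str]   # what decisions it influences
    owner: Optional[str] = None       # who owns it (None = nobody assigned)
    approved: bool = False            # False = in use without formal approval


def governance_gaps(inventory: list[AISystemRecord]) -> dict[str, list[str]]:
    """Return the names of systems that lack an owner or formal approval."""
    return {
        "no_owner": [s.name for s in inventory if not s.owner],
        "shadow_ai": [s.name for s in inventory if not s.approved],
    }


# Hypothetical example entries.
inventory = [
    AISystemRecord("credit-risk-model", "lending", ["loan approval"],
                   owner="Head of Credit Risk", approved=True),
    AISystemRecord("chat-assistant", "marketing", ["campaign copy"]),
]
print(governance_gaps(inventory))
```

Keeping ownership and approval status in the same record as deployment details means the gaps can be reported automatically rather than discovered during an incident.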

2. Shift from Periodic Review to Continuous Monitoring

AI systems change over time. Governance must reflect that.

Instead of one-time validation, organizations need:

- Continuous performance tracking

- Bias and drift detection

- Real-time alerts for anomalies

Governance is no longer a checkpoint. It’s an ongoing process.
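One common way to make drift detection concrete is the Population Stability Index (PSI), which compares the distribution of a model input or score at validation time with its distribution in production. The sketch below is a minimal pure-Python version, offered as one possible approach rather than a prescribed method. The function names are hypothetical, and the 0.1 and 0.25 thresholds are a widely used rule of thumb, not values taken from this article.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a baseline sample and a current sample of one variable."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if baseline is constant

    def shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range go to the first or last bin.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    base, cur = shares(baseline), shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


def drift_status(psi: float, warn: float = 0.1, alert: float = 0.25) -> str:
    """Map a PSI value to a status using rule-of-thumb thresholds (assumed)."""
    if psi < warn:
        return "stable"
    if psi < alert:
        return "warn"
    return "alert"
```

Run on a schedule against recent production data, a check like this turns "continuous performance tracking" into a signal that can trigger review or recalibration.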

3. Make Decision Accountability Explicit

One of the biggest risks in AI adoption is diffused responsibility.

When something goes wrong, the answer cannot be:

“The model made the decision.”

Organizations must clearly define:

- Who owns each AI system

- Who is accountable for outcomes

- How decisions are reviewed and escalated

Accountability must sit across the business, not just with technical teams.

4. Align Systems — Not Just Models

Most governance efforts focus on individual models.

That’s not enough.

The real challenge is ensuring that multiple AI systems work together coherently:

- Shared data assumptions

- Aligned objectives

- Consistent decision logic

Without this, organizations don’t build intelligence — they build fragmentation.
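A simple starting point for checking shared data assumptions is to have each system declare its key assumptions in a common format and then compare them. The sketch below assumes a hypothetical registry in which every system publishes a dictionary of assumption names and values; the system names and assumption keys are invented for illustration.

```python
from collections import defaultdict


def conflicting_assumptions(
    systems: dict[str, dict[str, object]],
) -> dict[str, dict[str, object]]:
    """Return each assumption that two or more systems declare differently."""
    declared: dict[str, dict[str, object]] = defaultdict(dict)
    for system, assumptions in systems.items():
        for key, value in assumptions.items():
            declared[key][system] = value
    return {
        key: by_system
        for key, by_system in declared.items()
        # repr() lets unhashable values such as lists be compared too.
        if len({repr(v) for v in by_system.values()}) > 1
    }


# Hypothetical declarations from two systems.
systems = {
    "credit_risk": {"income_definition": "gross", "lookback_days": 90},
    "fraud_detection": {"income_definition": "net", "lookback_days": 90},
}
print(conflicting_assumptions(systems))
```

A report like this does not resolve the disagreement, but it shows which assumptions need a decision and which owners need to be involved.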

5. Operationalize Responsible AI (Not Just Document It)

Principles alone don’t govern systems.

Frameworks from institutions such as the OECD AI Policy Observatory are valuable — but only if they are embedded in operations.

That means:

- Ethics tied to measurable outcomes

- Risk integrated into workflows

- Governance enforced through systems, not guidelines

Responsible AI is not a policy. It’s an operating model.
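One way to read "enforced through systems" is as an automated release gate: a model cannot be deployed unless a set of checks passes. The sketch below is a minimal example under stated assumptions. The check names, the fields of the release request, and the idea of a single numeric fairness gap are all hypothetical; real checks would come from an organization's own policies.

```python
from typing import Callable

# A check takes a release request (a plain dict here) and returns True on pass.
Check = Callable[[dict], bool]

# Hypothetical checks; names and fields are illustrative assumptions.
RELEASE_CHECKS: dict[str, Check] = {
    # Ethics tied to a measurable outcome: a fairness gap within an agreed limit.
    "fairness gap within limit": lambda r: (
        r.get("fairness_gap", 1.0) <= r.get("fairness_limit", 0.0)
    ),
    # Risk integrated into the workflow: a named reviewer has signed off.
    "risk review signed off": lambda r: bool(r.get("risk_signoff")),
    # Continuous oversight: monitoring is configured before launch.
    "monitoring configured": lambda r: bool(r.get("monitoring_enabled")),
}


def failed_checks(release: dict) -> list[str]:
    """Return the names of all checks the release request does not pass."""
    return [name for name, check in RELEASE_CHECKS.items() if not check(release)]


def can_deploy(release: dict) -> bool:
    """A release may proceed only when every check passes."""
    return not failed_checks(release)
```

Missing fields fail by default, so an incomplete request is blocked rather than waved through.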

The Bottom Line

Redesigning governance is not optional.

As AI becomes institutional infrastructure, governance becomes the mechanism that determines whether it creates value or risk.

And the organizations that get this right won’t just use AI better.

They’ll operate differently.

CEO Perspective: Governing the Institutions Built on AI

For CEOs, this is not a technology shift — it’s an institutional one.

AI is no longer just improving workflows. It is reshaping how decisions are made, how risks are managed, and how value is created across the organization. As these systems become embedded in core operations, governance can no longer sit within IT or compliance functions.

It becomes a leadership responsibility.

The critical mistake many executives make is focusing on where to deploy AI, rather than how AI-driven decisions are governed. That’s the gap.

The real question is not “How fast can we adopt AI?”

It’s “How well can we control and align the decisions AI is already making?”

This requires a shift in leadership priorities:

- From deployment speed to decision quality

- From technical performance to system-level accountability

- From a compliance mindset to strategic governance

Organizations that make this shift early will have a clear advantage. Not because they use more AI, but because they use it with control, transparency, and alignment.

Those that don’t will face the opposite: fragmented systems, rising risk, and eroding trust.

In the next phase of competition, access to AI will not be the differentiator. Most organizations will have similar tools and capabilities.

The difference will come down to one thing:

Which organizations can build the most reliable, aligned, and governable decision systems?

That’s what will define leadership in an AI-driven economy.

FAQs

1. What does the institutionalization of AI mean?

The institutionalization of AI refers to the process by which artificial intelligence becomes embedded within organizational decision-making systems, operational infrastructure, and governance structures.

2. Why do organizations need new governance frameworks for AI?

AI systems are dynamic and continuously evolving, unlike traditional software systems. Governance frameworks must therefore include mechanisms for monitoring, accountability, and transparency.

3. What risks arise from unmanaged AI adoption?

Risks include algorithmic bias, regulatory violations, security vulnerabilities, and a lack of accountability for automated decisions.

4. How does AI affect organizational decision-making?

AI systems increasingly influence decisions across finance, healthcare, logistics, hiring, and risk management, making governance essential for responsible deployment.

5. What are the core components of effective AI governance?

Effective governance typically includes AI system inventories, monitoring frameworks, accountability structures, compliance alignment, and responsible AI policies.