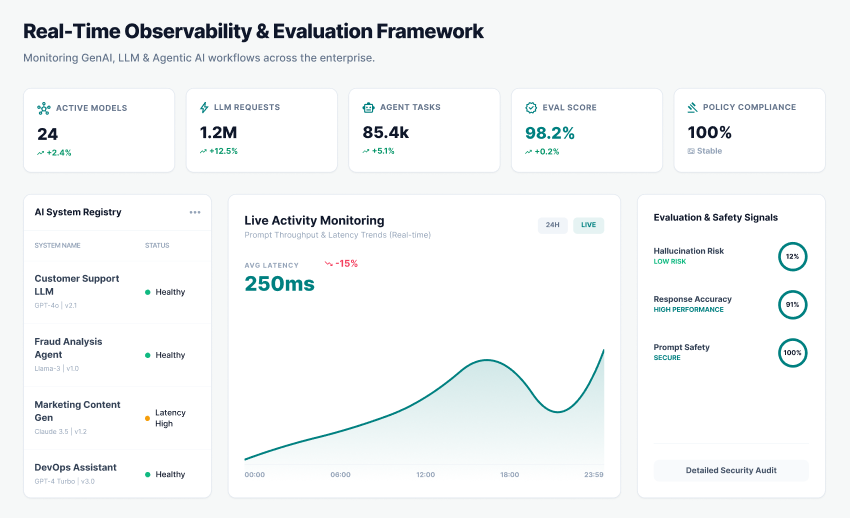

Real-Time Observability & Evaluation Framework

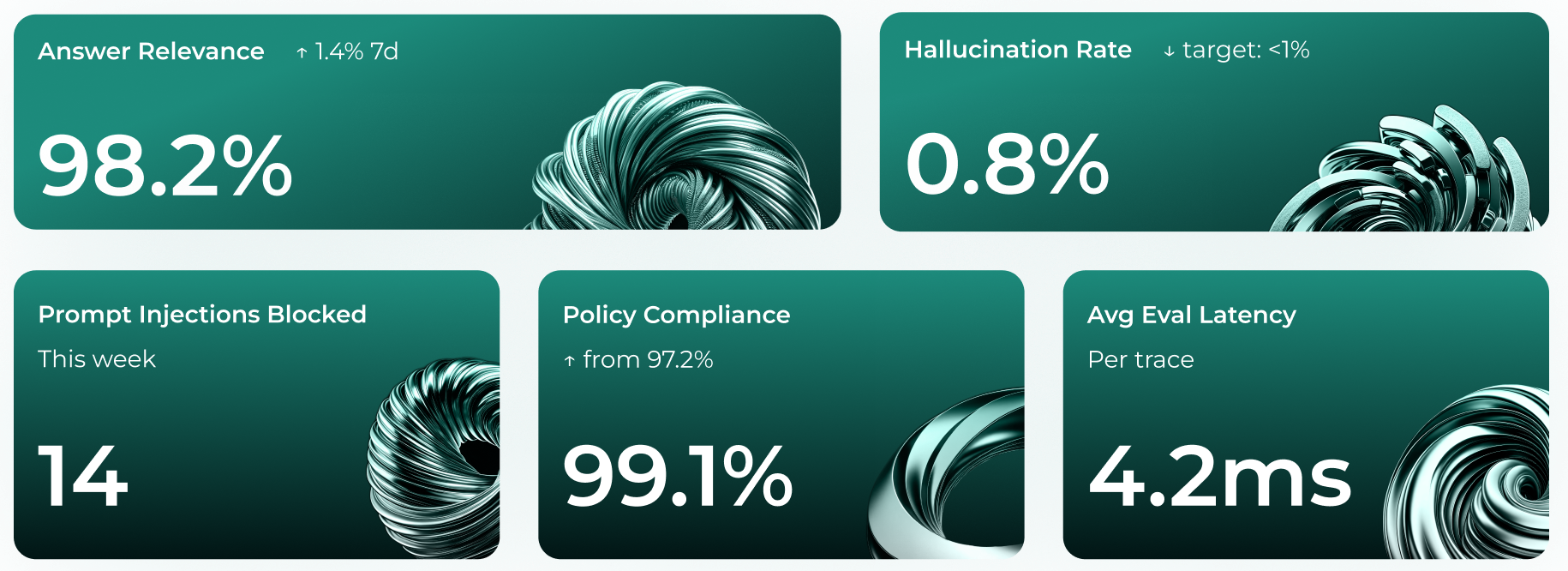

Adeptiv AI’s observability framework is built on a telemetry-first architecture: it captures structured trace data in real time, streams it to the evaluation pipeline, and scores every interaction against 20+ governance metrics.

The Production Gap: Why Deployed AI Is Ungoverned AI

Governance teams invest significant effort assessing AI risks before deployment. Then the AI goes live — and governance effectively stops. Production is where risk actually materializes: where models hallucinate under real user pressure, where prompt injections are attempted by adversarial users, where PII surfaces in outputs that were clean in testing, and where model behavior drifts silently as usage patterns evolve. Traditional monitoring tools track uptime, latency, and error rates — none of which tell you whether the AI said something harmful, fabricated, biased, or legally indefensible.

Silent Failure Mode

An LLM can return HTTP 200 with sub-100ms latency and zero infrastructure errors while simultaneously fabricating regulatory citations, leaking PII, or producing discriminatory content. Traditional APM tools see a healthy system. Governance teams see nothing until a user complaint, legal notice, or regulatory inquiry surfaces the failure.
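To make the silent failure concrete, here is a minimal Python sketch under stated assumptions. The `LlmResponse` record, the 100 ms threshold, and the email-only regex are all hypothetical illustrations, not part of any Adeptiv SDK, and a real PII detector would need to cover far more than email addresses. It shows one response that passes an infrastructure-style health check while failing a content-level check.

```python
import re
from dataclasses import dataclass


# Hypothetical record of one LLM response; field names are illustrative only.
@dataclass
class LlmResponse:
    status_code: int
    latency_ms: float
    text: str


# Deliberately crude pattern: matches email addresses and nothing else.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def apm_healthy(resp: LlmResponse) -> bool:
    """Infrastructure view: only the status code and latency are inspected."""
    return resp.status_code == 200 and resp.latency_ms < 100


def contains_email(resp: LlmResponse) -> bool:
    """Content view: does the generated text expose an email address?"""
    return EMAIL_PATTERN.search(resp.text) is not None


resp = LlmResponse(
    status_code=200,
    latency_ms=84.0,
    text="Your advisor is Jane Roe, reachable at jane.roe@example.com.",
)

# Both checks come back True: healthy for APM, yet the output leaks an email.
print("APM healthy:", apm_healthy(resp))
print("Contains email:", contains_email(resp))
```

The two functions look at disjoint fields of the same response, which is why a dashboard built only on the first one cannot see the second kind of failure.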

Non-Determinism at Scale

LLMs are probabilistic systems. The same prompt can produce meaningfully different outputs across requests, model versions, or context shifts. Behavior observed in pre-production testing does not predict behavior under production traffic volume, edge-case user inputs, or adversarial prompting patterns.

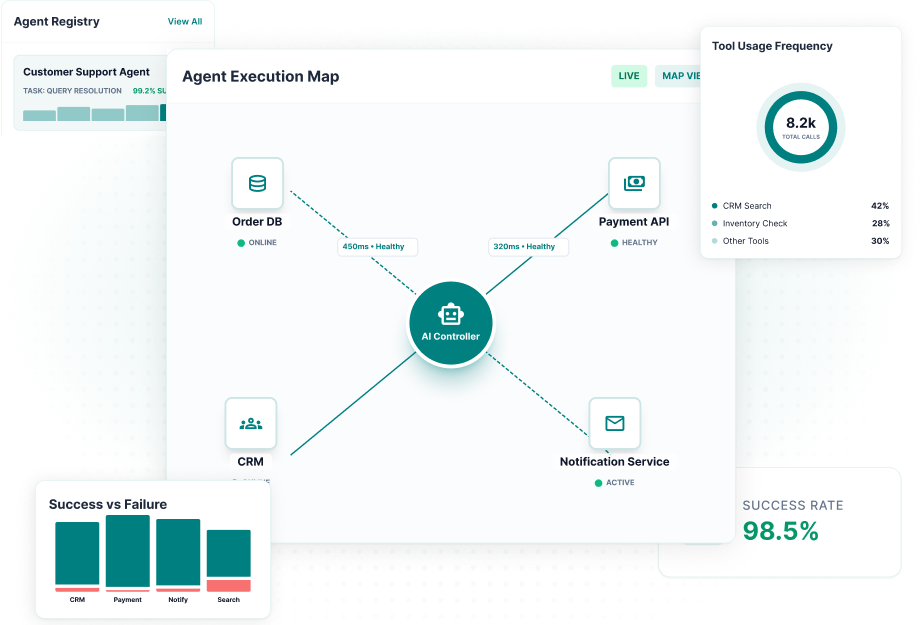

Agentic Complexity

Multi-agent and agentic AI systems — where models call tools, trigger actions, and interact with other models — create execution paths that are exponentially harder to observe. A single user request may spawn dozens of sub-operations across retrieval, reasoning, and action layers, each capable of producing risk that propagates through the chain.

Compliance Without Evidence

AI governance frameworks — EU AI Act Article 72, ISO/IEC 42001 Clause 9, and NIST AI RMF MEASURE — require demonstrable, ongoing evidence of model behavior, not point-in-time assurances. Organizations that cannot produce production logs, evaluation scores, and incident records for their AI systems cannot demonstrate compliance, only claim it.

How It Works

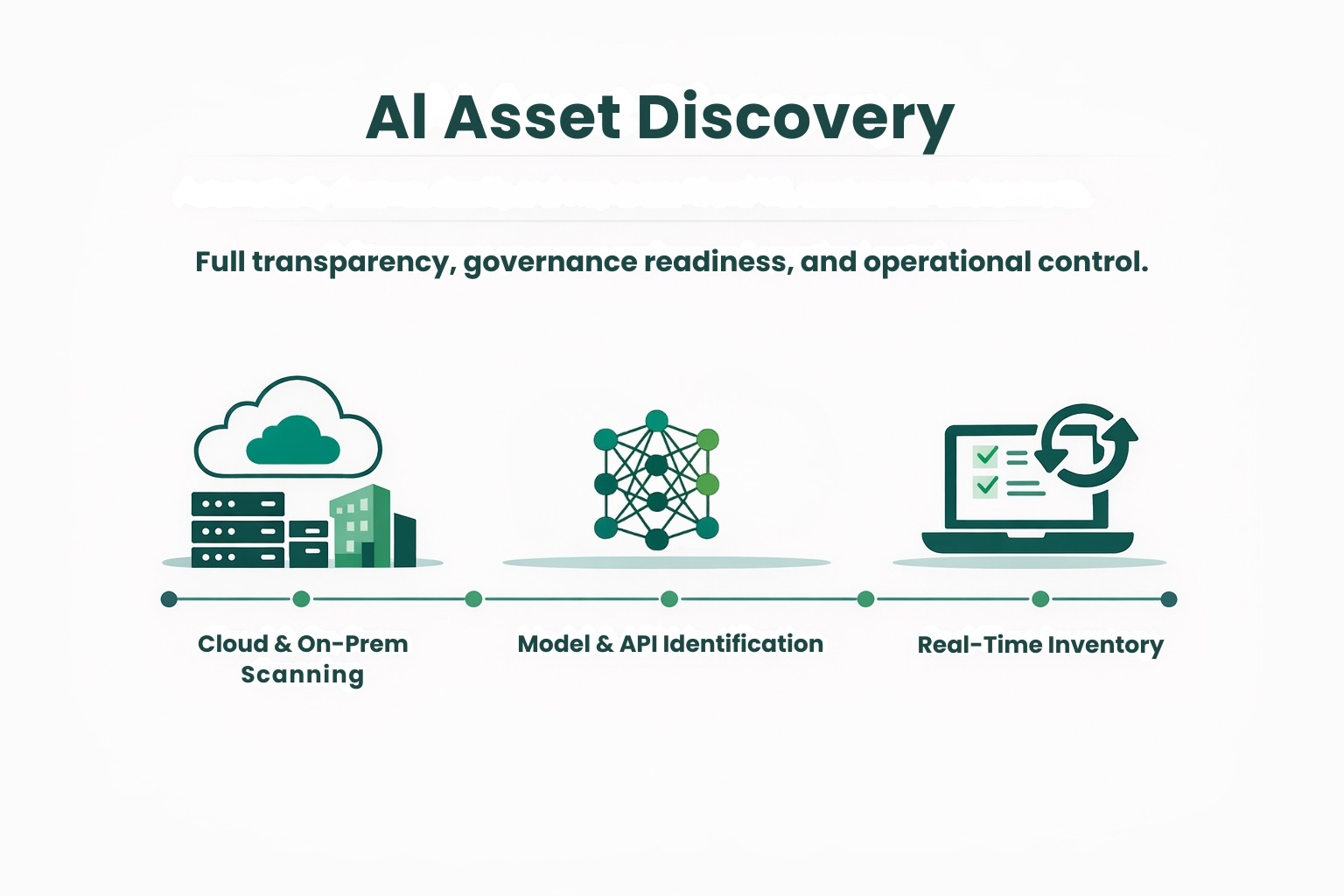

The Auto Discovery and Inventory Management module operates through three integrated technical layers that work together to provide continuous, accurate, and actionable AI visibility.

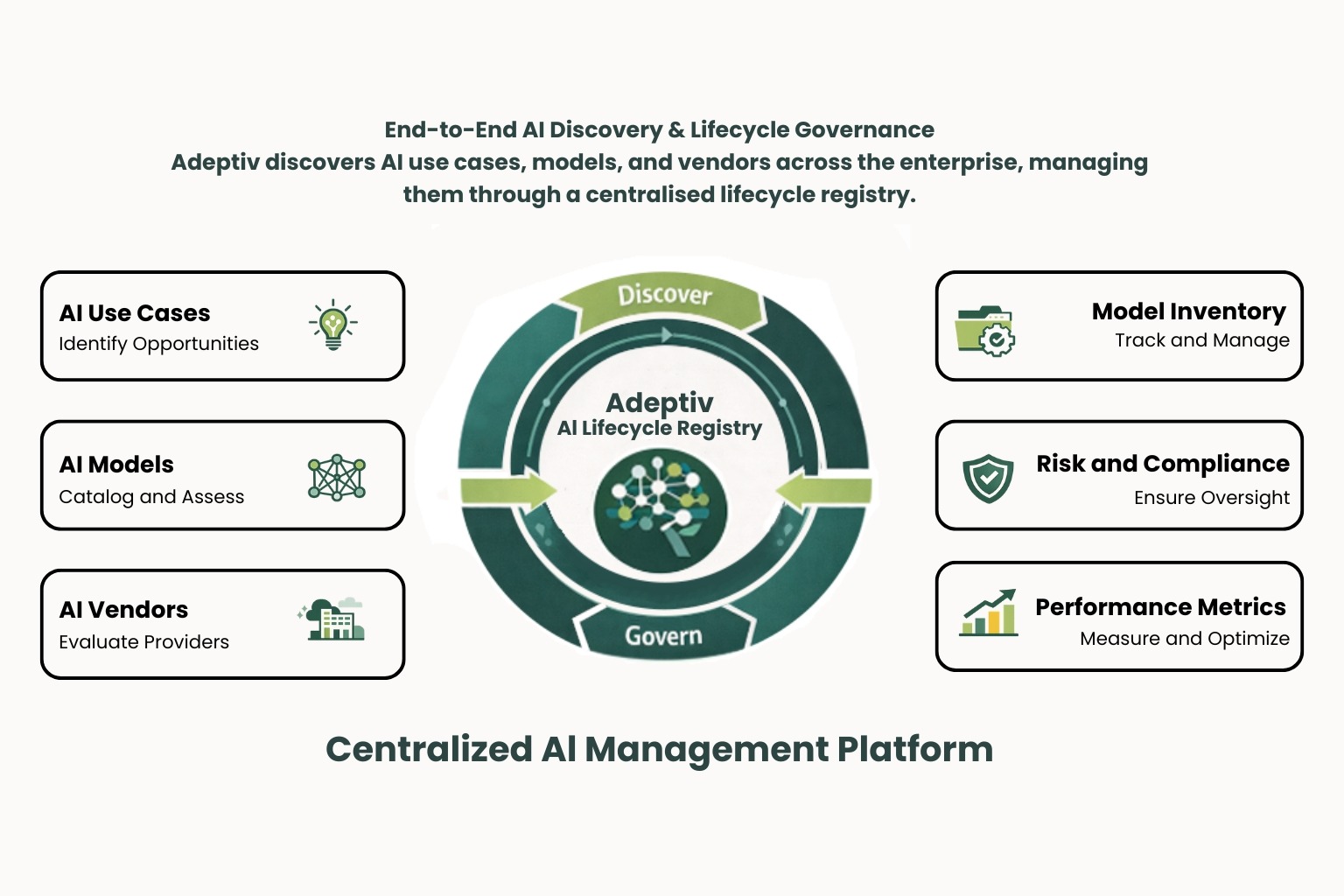

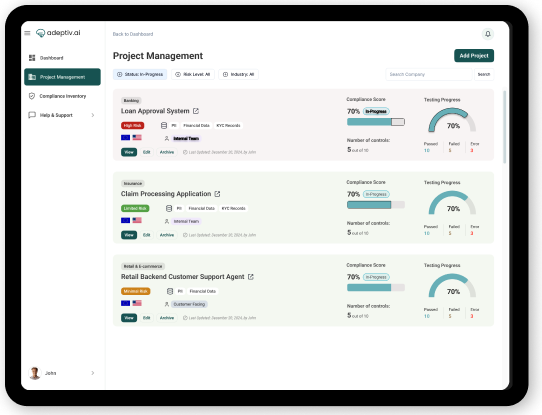

End-to-End AI Discovery & Lifecycle Governance

Adeptiv discovers AI use cases, models, and vendors across the enterprise, managing them through a centralized lifecycle registry.

Integrated Risk, Gap & Compliance Management

The platform evaluates vendor and model risks, identifies governance gaps, maps regulations to controls, and automates compliance workflows.

Continuous Monitoring, Control & Audit Readiness

Adeptiv connects inventory data to real-time monitoring, controls, and automated reporting, enabling risk evaluation and audit-ready dashboards.

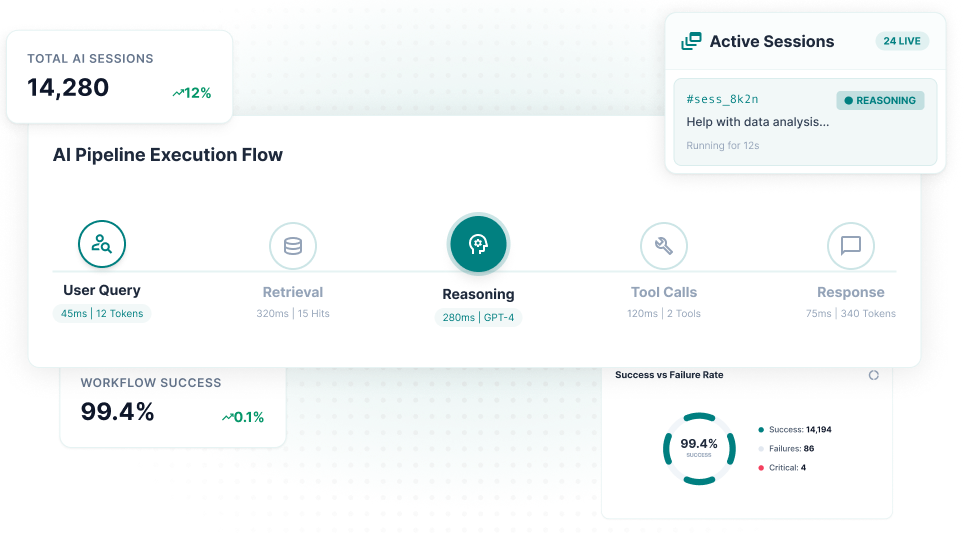

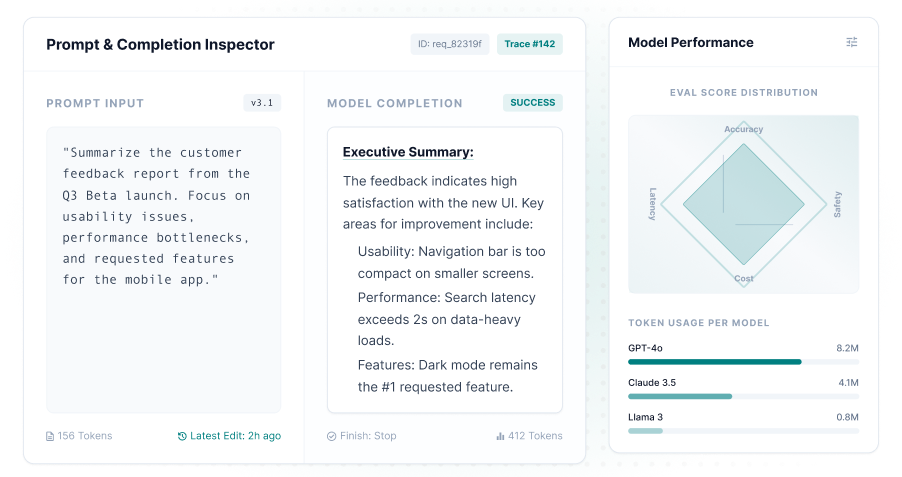

How the Adeptiv Observability SDK Works

Adeptiv AI’s observability framework is built on a telemetry-first architecture aligned with OpenTelemetry standards — the same foundation used by Arize, Langfuse, and Datadog LLM Observability.

Use Case Context · Jurisdiction · Risk Tier · Sector

Workflow Level

Traces the full end-to-end execution of an AI pipeline from initial user request through retrieval, reasoning, tool calls, and final response generation.

- Session context

- Latency across stages

- Total token consumption

- Aggregate evaluation scores
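The workflow-level items above can be pictured as a single trace record with one entry per pipeline stage. The following Python sketch is an assumption for illustration only: `StageSpan`, `WorkflowTrace`, and the metric names are hypothetical and do not describe the actual Adeptiv data model. A production setup would more likely emit OpenTelemetry spans than hand-built dataclasses.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class StageSpan:
    """One stage of the pipeline (hypothetical shape)."""
    name: str                      # e.g. "retrieval", "reasoning", "response"
    latency_ms: float
    tokens: int = 0
    scores: dict[str, float] = field(default_factory=dict)


@dataclass
class WorkflowTrace:
    """End-to-end trace for one user request within a session."""
    session_id: str
    stages: list[StageSpan] = field(default_factory=list)

    def latency_by_stage(self) -> dict[str, float]:
        return {s.name: s.latency_ms for s in self.stages}

    def total_tokens(self) -> int:
        return sum(s.tokens for s in self.stages)

    def aggregate_scores(self) -> dict[str, float]:
        """Average each metric over the stages that reported it."""
        collected: dict[str, list[float]] = {}
        for stage in self.stages:
            for metric, value in stage.scores.items():
                collected.setdefault(metric, []).append(value)
        return {metric: mean(values) for metric, values in collected.items()}


trace = WorkflowTrace(
    session_id="session-123",
    stages=[
        StageSpan("retrieval", latency_ms=40.0, scores={"groundedness": 1.0}),
        StageSpan("reasoning", latency_ms=310.0, tokens=420,
                  scores={"groundedness": 0.5}),
        StageSpan("response", latency_ms=120.0, tokens=180,
                  scores={"toxicity": 0.0}),
    ],
)

print(trace.latency_by_stage())
print(trace.total_tokens())
print(trace.aggregate_scores())
```

Averaging is only one possible aggregation; a governance team might prefer the worst score per metric so that a single bad stage is not diluted.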

2000+ Controls Library · AI-Powered · Executable

Model Level

Instruments individual LLM calls within the pipeline, capturing the input prompt, model parameters, raw completion, finish reason, token counts, and per-call evaluation scores.

- Granular comparison across model versions

- Prompt variants and A/B testing

- Monitor model updates and revisions
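As a rough illustration of model-level capture, the sketch below stores one record per LLM call with the fields listed above and then compares a metric across model versions. Everything here is assumed for the example: the `ModelCallRecord` shape, the `mean_score_by_model` helper, the model names, and the `groundedness` metric are hypothetical and not taken from the Adeptiv SDK.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class ModelCallRecord:
    """One instrumented LLM call (hypothetical shape)."""
    model: str
    prompt: str
    parameters: dict[str, float]
    completion: str
    finish_reason: str
    prompt_tokens: int
    completion_tokens: int
    scores: dict[str, float] = field(default_factory=dict)


def mean_score_by_model(records: list[ModelCallRecord], metric: str) -> dict[str, float]:
    """Group per-call scores by model version and average one metric."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for record in records:
        if metric in record.scores:
            grouped[record.model].append(record.scores[metric])
    return {model: mean(values) for model, values in grouped.items()}


records = [
    ModelCallRecord("model-v1", "Summarize the policy.", {"temperature": 0.2},
                    "Summary A", "stop", 12, 40, {"groundedness": 1.0}),
    ModelCallRecord("model-v1", "Summarize the policy.", {"temperature": 0.2},
                    "Summary B", "stop", 12, 38, {"groundedness": 0.5}),
    ModelCallRecord("model-v2", "Summarize the policy.", {"temperature": 0.2},
                    "Summary C", "length", 12, 64, {"groundedness": 0.25}),
]

print(mean_score_by_model(records, "groundedness"))
```

The same grouping works for prompt variants: swapping the grouping key from `model` to a prompt-template identifier would give an A/B comparison instead of a version comparison.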

Collection · Analysis · Validation · Traceability

Agent Level

Critical for governing agentic AI where execution paths are dynamic and non-deterministic.

- Observes autonomous agent behavior

- Tool selection decisions, external API calls

- Sub-agent orchestration, action outcomes

- Reasoning chain visibility
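Because agent execution paths branch at run time, one way to picture agent-level telemetry is as a tree of steps rather than a flat log. The Python sketch below is a simplified assumption: `AgentStep`, its `kind` values, and the helper functions are hypothetical names chosen for illustration. It records tool calls, API calls, and sub-agent hand-offs, then walks the tree to find steps whose outcome was not successful.

```python
from dataclasses import dataclass, field
from typing import Iterator


@dataclass
class AgentStep:
    """One observed step in an agent run (hypothetical shape)."""
    agent: str
    kind: str            # "reasoning", "tool_call", "api_call", or "sub_agent"
    detail: str
    outcome: str = "ok"
    children: list["AgentStep"] = field(default_factory=list)


def walk(step: AgentStep, depth: int = 0) -> Iterator[tuple[int, AgentStep]]:
    """Yield every step in the tree, depth-first, with its nesting depth."""
    yield depth, step
    for child in step.children:
        yield from walk(child, depth + 1)


def failed_steps(root: AgentStep) -> list[AgentStep]:
    """Collect all steps whose outcome is anything other than 'ok'."""
    return [step for _, step in walk(root) if step.outcome != "ok"]


root = AgentStep(
    agent="planner",
    kind="reasoning",
    detail="decide how to answer a refund question",
    children=[
        AgentStep("planner", "tool_call", "lookup_order"),
        AgentStep(
            "billing-agent", "sub_agent", "handle refund",
            children=[
                AgentStep("billing-agent", "api_call", "POST /refunds",
                          outcome="error: timeout"),
            ],
        ),
    ],
)

# Print the execution path with indentation that mirrors the call hierarchy.
for depth, step in walk(root):
    print("  " * depth + f"{step.agent} [{step.kind}] {step.detail} -> {step.outcome}")

print([step.detail for step in failed_steps(root)])
```

Keeping the parent-child links is the design point: a failure deep in a sub-agent can be traced back to the planner decision that triggered it.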

Governance-Grade Observability for Production AI

Shift Left on Production Risk

Pre-production testing catches only the risks you anticipated. Production telemetry surfaces risks you didn't — edge cases, adversarial users, and emergent failure modes.

Continuous Compliance Evidence

EU AI Act Article 72 post-market monitoring obligations, ISO 42001 Clause 9.1 performance evaluation, and NIST AI RMF MEASURE 2.5 all require ongoing evidence of model behavior.

Incident Response Velocity

The Stanford AI Index (2025) documented 233 AI incidents, a 56% increase year-on-year. Organizations with real-time observability can detect incidents in minutes.

Trust at the Governance Layer

Risk dashboards and observability data give CROs, CISOs, and AI governance committees live operational intelligence — not quarterly reports built from sampling.

Measurable ROI & Business Impact

See real-time AI behavior in your production environment


Frequently Asked Questions (FAQs)

What is AI Inventory Management?

- AI Inventory Management is the practice of cataloging every AI system, model, agent, data source, and third-party tool in use across an organization, and keeping that record up to date.

Why is AI Inventory Management critical for AI governance?

- You cannot govern, secure, or audit AI systems that you do not know exist. A complete inventory provides the visibility needed to assess risk, ensure compliance, maintain accountability, and oversee each system across its entire lifecycle.

What types of AI systems should be included in an AI inventory?

- An AI inventory should cover models built in-house, third-party AI tools, SaaS applications that include AI, large language models, agents, APIs, plugins, and AI features embedded in business applications.

What is Shadow AI, and why is it risky?

- Shadow AI refers to AI tools that employees or teams adopt without official approval or oversight. These tools can cause data leaks, expose intellectual property, violate compliance rules, and create regulatory risk.

How does AI Inventory Management support regulatory compliance?

- AI inventory management helps companies comply with regulations and standards such as the EU AI Act, GDPR, and ISO 42001, which require organizations to keep records of their AI systems, evaluate potential risks, and show how those systems are monitored. An AI inventory makes it easier to track systems, assign ownership, and prepare for audits.