The Hidden Cost of Ignoring AI Governance in Europe

At a Glance

- AI governance in Europe is no longer optional—it’s becoming a market access requirement.

- The EU AI Act introduces strict financial, operational, and legal consequences for non-compliance.

- The real cost of ignoring AI governance goes beyond fines—it impacts strategy, trust, and scalability.

- Ungoverned AI creates decision inconsistency and hidden enterprise risk.

- Retrofitting governance is significantly more expensive than building it from the start.

- The next competitive advantage in Europe is not AI capability, but AI governability.

Most organisations treating AI governance as a compliance checkbox are asking the wrong question. The real question is not how much it costs to govern AI — it is how much it costs not to. And in Europe, that bill is already accruing interest.

Boards are approving AI investment at a record pace. Procurement teams are deploying models across customer decisions, risk assessments, and operational workflows. Yet in many of these same organisations, the governance infrastructure — the systems, processes, and accountability structures that make AI controllable — is either underdeveloped, fragmented, or simply absent.

This is not a technology gap. It is a strategic blind spot. And the compounding nature of ungoverned AI means that by the time the cost becomes visible, a significant portion of it is already irreversible.

Europe Is Playing a Different Game

The EU AI Act is not, at its core, a compliance regime. It is an infrastructure mandate — a structural requirement to make AI systems legible, auditable, and accountable within one of the world’s largest economic blocs. Understanding this distinction matters enormously for how enterprises approach their response.

In force since August 2024, with obligations phasing in through 2027, the Act introduces risk-tiered obligations across the AI lifecycle. High-risk applications in healthcare, financial services, critical infrastructure, and employment decisions carry the most demanding requirements: mandatory conformity assessments, human oversight mechanisms, technical documentation, and transparency disclosures.

What makes Europe genuinely different is the extraterritorial reach. The Act applies to any provider or deployer whose AI system outputs are used within the EU — regardless of where that system was built or where the company is headquartered. A US bank using an AI credit decisioning model that affects European customers falls within scope. A Canadian insurer deploying an AI underwriting tool in Germany falls within scope. The jurisdictional logic mirrors GDPR, and the precedent from GDPR enforcement suggests regulators will not be timid about applying it.

Europe is fast becoming the global benchmark for AI regulation. Jurisdictions from Brazil to Singapore are referencing the EU framework in their own legislative drafts. For enterprise AI governance, this is not a regional compliance matter — it is an emerging global standard. Organisations that get ahead of it gain a structural advantage. Those that delay are building technical debt into the foundation of their decision-making infrastructure.

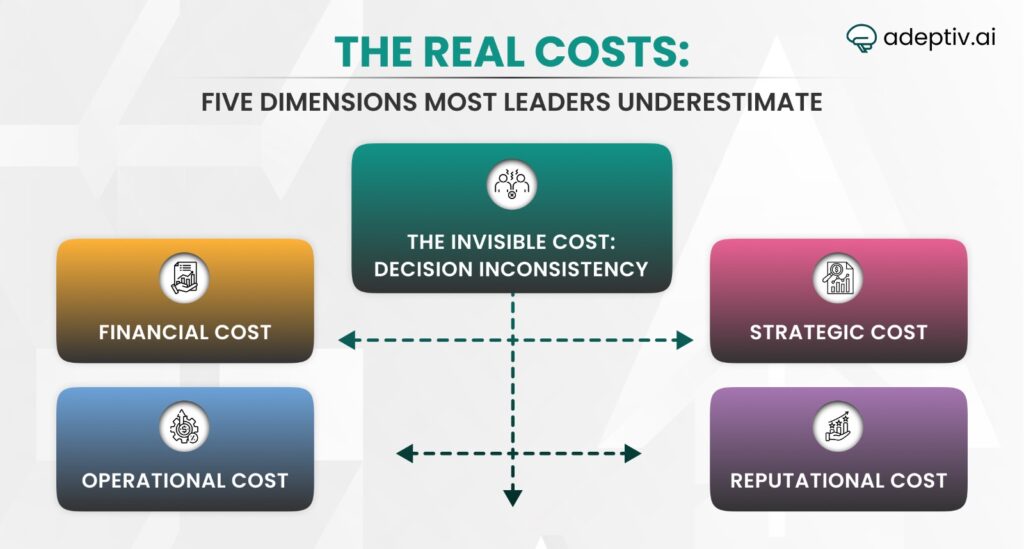

The Real Costs: Five Dimensions Most Leaders Underestimate

1. FINANCIAL COST

The penalty structure of the EU AI Act is deliberately designed to be impossible to ignore. Violations involving prohibited AI practices — such as social scoring systems or real-time biometric surveillance in public spaces — carry fines of up to €35 million or 7% of global annual turnover, whichever is higher. This surpasses even GDPR’s maximum penalty threshold of 4%.

Non-compliance with high-risk system obligations attracts fines of up to €15 million or 3% of global annual turnover. For a multinational with €10 billion in revenue, that tier alone represents a €300 million exposure, before any civil litigation, remediation costs, or market withdrawal expenses are factored in.

But direct fines represent only the visible portion. Compliance remediation after the fact is structurally more expensive than governance built in from the start. Systems that were not designed with explainability, auditability, and data lineage in mind require significant rearchitecting — not patching. The cost of retrofitting governance onto a production AI system at scale routinely exceeds the cost of building it correctly the first time by multiples.
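The penalty ceilings quoted in this section reduce to a simple calculation. The sketch below is illustrative only: the `PenaltyTier` class, the tier labels, and the `max_fine_eur` helper are hypothetical names, and it assumes the "whichever is higher" rule quoted for prohibited practices also applies to the high-risk tier, as the €300 million example implies. It computes a ceiling, not a predicted fine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    name: str
    fixed_cap_eur: int     # fixed ceiling in euros
    turnover_pct: float    # share of global annual turnover, in percent


# Figures as quoted in this article; the tier names are illustrative labels.
PROHIBITED_PRACTICES = PenaltyTier("prohibited practices", 35_000_000, 7.0)
HIGH_RISK_OBLIGATIONS = PenaltyTier("high-risk obligations", 15_000_000, 3.0)


def max_fine_eur(tier, global_turnover_eur):
    """Ceiling on the fine: the higher of the fixed cap and the turnover share.

    Assumption: 'whichever is higher' applies to every tier passed in.
    """
    turnover_based = global_turnover_eur * tier.turnover_pct / 100
    return max(tier.fixed_cap_eur, turnover_based)


# The article's example: a multinational with EUR 10 billion in revenue.
exposure = max_fine_eur(HIGH_RISK_OBLIGATIONS, 10_000_000_000)
print(f"{exposure:,.0f}")  # 300,000,000
```

For a smaller company the fixed cap dominates: with €100 million in turnover, 3% is only €3 million, so under this sketch the ceiling stays at €15 million.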

2. OPERATIONAL COST

Ungoverned AI creates operational drag in ways that are rarely measured or attributed correctly. When AI systems lack clear documentation, version control, and model risk frameworks, changes become expensive. Every regulatory inquiry, internal audit, or customer escalation triggers a manual discovery process — piecing together what model made which decision, on which data, under which conditions.

In highly regulated industries, this is not a theoretical concern. It is a lived operational reality. A major financial institution fielding a regulatory inquiry into its AI-driven lending decisions without adequate governance documentation faces weeks of costly investigation, potential system suspension, and the reputational exposure that accompanies both.

Operational fragmentation — where AI systems are deployed across business units without centralised governance — also creates significant model drift risk. Models trained on one distribution of data begin making systematically different decisions as conditions change, without anyone necessarily noticing until the consequences surface in outcomes data. This is not a failure of technology. It is a failure of governance infrastructure.
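Drift of this kind can be surfaced with fairly simple statistics. One common choice, which this article does not prescribe, is the Population Stability Index (PSI): it compares how one input feature was distributed at training time with how it is distributed in recent production data. The function name, the equal-width binning, and the floor for empty bins below are all assumptions made for this sketch.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample and a recent sample of one numeric feature.

    Bins are equal-width over the range of the reference sample; recent values
    outside that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: applicant scores at training time vs. a shifted recent sample.
training_scores = list(range(100))
recent_scores = [s + 50 for s in training_scores]
psi = population_stability_index(training_scores, recent_scores)
```

A frequently cited rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, though the right threshold depends on the model and the feature. The governance point is that someone owns this check and its result is recorded, whichever statistic is used.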

3. STRATEGIC COST

Market access in Europe increasingly depends on demonstrable AI governance. Procurement frameworks in financial services, healthcare, and the public sector are embedding AI conformity requirements into supplier contracts. A company that cannot demonstrate compliance with high-risk AI obligations — through documentation, audit trails, and human oversight mechanisms — will find itself excluded from significant portions of the enterprise market.

This strategic cost is compounding in a way that is easy to underestimate. Early-mover enterprises that establish rigorous governance frameworks are setting the commercial standard. Late movers will not merely face a compliance hurdle — they will face a competitive disadvantage in sales cycles, procurement evaluations, and partnership negotiations.

The US-EU divergence on AI governance also creates specific strategic risks for American companies operating in Europe. While the US continues to favour a sector-by-sector, voluntary approach, the EU has created binding obligations. American enterprises accustomed to governing AI at the discretion of internal risk committees will need to materially restructure their approach to operate in European markets — or accept the strategic cost of reduced access to them.

4. REPUTATIONAL COST

Trust is lost quickly and rebuilt slowly. IBM’s research suggests that 71% of CEOs believe establishing and maintaining customer trust has more impact on organisational success than any specific product or service. AI governance is one of the principal mechanisms through which trust in automated decisions is either established or eroded.

A single high-profile failure — an AI system that discriminates in credit decisions, misdiagnoses based on biased training data, or produces inconsistent outputs in a regulated process — can reframe how stakeholders perceive an organisation’s entire AI strategy. The reputational damage is not confined to the system in question. It cascades across investor confidence, talent attraction, and regulatory relationships simultaneously.

What makes this particularly dangerous is that the damage often precedes the detection. AI systems can produce systematically problematic outputs for extended periods before anyone identifies the pattern. By the time the harm is visible, the compounding has already occurred.

5. THE INVISIBLE COST: DECISION INCONSISTENCY

Perhaps the least discussed — and most consequential — cost of ungoverned AI is the loss of decision coherence across the enterprise. When multiple AI systems, trained on different data, governed by different teams, and optimised for different metrics, are making consequential decisions simultaneously, the organisation begins to behave in ways that no one has designed and no one fully controls.

“Most organisations scale AI faster than they can govern it. The gap between deployment and accountability is where risk accumulates silently.”

A retail bank might approve loans through one AI model while flagging similar customers as high risk through a separate fraud detection system. A healthcare provider might prioritise patient care pathways through an AI triage tool while an administrative AI simultaneously deprioritises the same patients for resource allocation. These inconsistencies are not edge cases — they are structural outcomes of uncoordinated AI deployment.

From what we at Adeptiv AI are seeing across enterprises, this is the governance gap that keeps senior risk officers awake at night: not a single catastrophic failure, but the slow accumulation of inconsistent, unauditable, and unaccountable AI decisions that together form a systemic risk the organisation is not equipped to see, let alone manage.
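The retail bank example above suggests a basic cross-system check: join the outputs of two models on a shared customer identifier and list the cases where they disagree. The sketch below assumes both systems expose their latest decision per customer as a simple mapping. The function name, the labels `"approve"` and `"high_risk"`, and the customer IDs are hypothetical.

```python
def find_conflicts(credit_decisions, fraud_flags):
    """Return IDs of customers approved for credit but flagged high risk elsewhere.

    Both arguments map customer ID -> latest decision label from one system.
    """
    return sorted(
        customer_id
        for customer_id, decision in credit_decisions.items()
        if decision == "approve" and fraud_flags.get(customer_id) == "high_risk"
    )


# Hypothetical outputs from two independently governed systems.
credit_decisions = {"c-001": "approve", "c-002": "decline", "c-003": "approve"}
fraud_flags = {"c-001": "high_risk", "c-002": "high_risk", "c-003": "low_risk"}

conflicts = find_conflicts(credit_decisions, fraud_flags)
print(conflicts)  # ['c-001']
```

In practice the harder part is organisational rather than technical: a check like this requires that both systems log decisions against the same identifier and that someone is accountable for reviewing the conflicts.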

The Enterprise Reality Gap

There is a persistent and widening gap between AI adoption and governance maturity in European enterprises. Organisations are deploying production AI at a speed that their risk and compliance functions are structurally not designed to match. This is not primarily a resource problem. It is an architecture problem.

Most enterprise governance frameworks were built for static systems — software that behaves consistently, can be fully documented at deployment, and changes only through deliberate version releases. AI systems are categorically different. They learn, drift, and interact with each other in ways that traditional governance mechanisms were not designed to capture.

The result is a growing inventory of ungoverned or partially governed AI deployments sitting inside regulated institutions — making consequential decisions daily, without the documentation, oversight, or accountability structures the EU AI Act will require of them.

A Scenario Worth Sitting With

Consider a mid-sized European bank that deployed an AI-assisted loan origination system eighteen months ago. The model was built by the data science team, approved through an internal risk review, and has been making credit recommendations ever since. It has performed well by the metrics the business tracks: faster decisions, lower default rates, and higher throughput.

What the bank has not done is build the governance infrastructure around it. There is no formal model risk register. The training data has not been audited for demographic bias. The model’s decision logic is not explainable in terms that a compliance officer could present to a regulator. There is no human oversight mechanism for edge cases. And the system has drifted — the economic conditions it was trained on look materially different from the ones it is operating in today.

Under the EU AI Act, this system almost certainly qualifies as high-risk. The bank is now facing a compliance rearchitecting project that will take months and cost significantly more than a governance-first approach would have required at the outset. More importantly, it cannot be certain that the decisions this model has made over the past eighteen months are legally defensible. That uncertainty is not just a legal liability. It is a strategic one.

Reframing the Question: Governance as Infrastructure

The most consequential shift available to enterprise leaders right now is a conceptual one. Governance is not compliance. Compliance is what you demonstrate to a regulator. Governance is the operational infrastructure that makes your AI systems trustworthy — to regulators, to customers, to your own board, and to the people making decisions based on their outputs.

This distinction matters because it changes where governance sits in the organisation and when it enters the AI development lifecycle. Compliance thinking treats governance as a final-stage gate — a set of boxes to check before deployment. Infrastructure thinking treats governance as foundational — the architecture upon which AI systems are built, tested, and operated.

The organisations building durable competitive advantage in AI are not those with the most capable models. They are those whose AI systems are the most governable — explainable on demand, auditable end-to-end, correctable in real time, and accountable to clear ownership structures. In a regulated market, governability is a product feature with material commercial value.

What CEOs Are Getting Wrong

Most CEOs are asking their teams the wrong questions about AI governance. They are asking: “Are we compliant?” when they should be asking: “Are we in control?”

Compliance is a point-in-time determination. Control is a continuous operational state. An organisation can be compliant on the day of an audit and fundamentally ungoverned in between. The EU AI Act, over time, will expose the difference — not through annual audits, but through incident-triggered investigations, regulator inquiries, and the kind of systemic failures that compound quietly until they cannot be ignored.

The questions CEOs should be asking their CIOs and CROs include: Do we have a complete inventory of AI systems making consequential decisions, and do we know their risk classification under the Act? For every high-risk AI system in operation, can we produce the technical documentation, human oversight mechanisms, and data governance evidence required by law — today, not in six months? And perhaps most critically: if an AI system causes harm tomorrow, do we have the governance infrastructure to understand what happened, demonstrate accountability, and prevent recurrence?

If the honest answer to any of these questions is uncertain, that uncertainty has a cost. It is just that the cost has not yet been invoiced.
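The first two questions above can be turned into a check that runs continuously rather than at audit time. The following is a minimal sketch, assuming an organisation keeps a machine-readable inventory of its AI systems. The `AISystem` record, the evidence labels, and the risk class strings are hypothetical and do not reproduce the Act's own wording or full list of requirements.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set

# Evidence types named in the questions above; labels are illustrative.
REQUIRED_EVIDENCE = {"technical_documentation", "human_oversight", "data_governance"}


@dataclass
class AISystem:
    name: str
    risk_class: Optional[str]  # e.g. "high", "minimal"; None = not yet classified
    evidence: Set[str] = field(default_factory=set)


def governance_gaps(inventory: List[AISystem]) -> Dict[str, List[str]]:
    """Map each system with open gaps to the items it is missing."""
    gaps: Dict[str, List[str]] = {}
    for system in inventory:
        if system.risk_class is None:
            gaps[system.name] = ["risk classification"]
        elif system.risk_class == "high":
            missing = sorted(REQUIRED_EVIDENCE - system.evidence)
            if missing:
                gaps[system.name] = missing
    return gaps


# Hypothetical inventory for illustration.
inventory = [
    AISystem("loan-origination", "high", {"technical_documentation"}),
    AISystem("support-chatbot", None),
    AISystem("spam-filter", "minimal"),
]
for name, missing in governance_gaps(inventory).items():
    print(f"{name}: missing {', '.join(missing)}")
```

An empty result from a check like this is closer to "in control" than a one-off audit report, because it can be re-run every time a system is added or changed.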

The Cost Is Already Compounding

There is a tempting logic in waiting — waiting for enforcement to begin in earnest, waiting for a competitor to be made an example of, waiting for internal capacity to build. But AI governance is not a problem that responds well to delay. Every ungoverned system deployed today is tomorrow’s remediation project. Every unaudited decision made by an unaccountable model is a potential liability that lives in the organisation’s history indefinitely.

The organisations that will define the next phase of enterprise AI are not those that built the most AI, fastest. They are those that built AI that could be trusted — by their regulators, their customers, and their own leadership. In Europe, that trust is being codified into law. The timeline is not theoretical. The obligations are not optional. And the cost of being unready is no longer hypothetical.

“For organisations operating in Europe, the next competitive advantage will not be AI capability, but governability.”

The question is no longer whether governance is required. It is how quickly it can be operationalised — and whether your organisation is building that capability now, or waiting until the cost of not having it becomes impossible to ignore.

FAQs

1. What is AI governance in Europe?

AI governance in Europe refers to the frameworks, processes, and controls required to ensure AI systems are transparent, auditable, compliant, and accountable, especially under regulations like the EU AI Act.

2. What are the penalties for non-compliance with the EU AI Act?

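Organisations can face fines of up to €35 million or 7% of global annual turnover, whichever is higher, for prohibited AI practices, and up to €15 million or 3% of global annual turnover for non-compliance with high-risk system obligations.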

3. Why is AI governance important for enterprises?

AI governance ensures risk mitigation, regulatory compliance, decision accountability, and trust, which are critical for operating in regulated markets like Europe.

4. What is the biggest hidden risk of ignoring AI governance?

The biggest hidden risk is decision inconsistency—where multiple AI systems make conflicting or unexplainable decisions, creating systemic enterprise risk.

5. Can companies outside Europe be affected by the EU AI Act?

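Yes. The EU AI Act has extraterritorial scope: it applies to providers and deployers whose AI system outputs are used within the EU, regardless of where the system was built or where the company is headquartered.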

6. Is it more expensive to implement AI governance later?

Yes. Retrofitting governance into existing AI systems is significantly more costly and complex than building it into the system from the beginning.