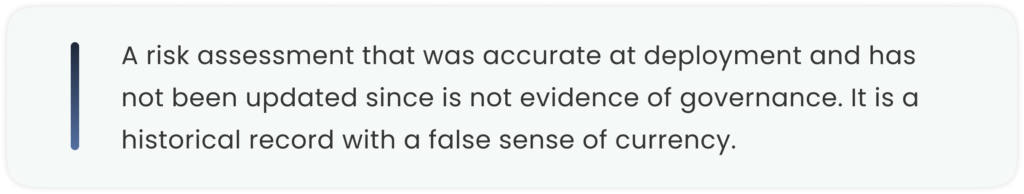

Most enterprises treat AI risk assessment as a compliance exercise. They conduct it once, file the output, and move on. The models that will cause the most serious operational damage in the next two years are overwhelmingly the ones that passed their initial risk assessments without issue.

At a Glance

- AI Risk Assessment is no longer a one-time compliance task—it must function as a continuous governance layer across the full AI lifecycle.

- Static assessments performed only at deployment fail to capture model drift, changing data patterns, and real-world operational risks.

- The EU AI Act requires continuous risk management, human oversight, post-market monitoring, and audit-ready documentation for high-risk AI systems, with the high-risk obligations beginning to apply in August 2026.

- Third-party vendor AI creates major governance blind spots, as deployer obligations still apply even when AI systems are externally sourced.

- Effective AI Risk Assessment must combine technical, operational, legal, and compliance oversight—not just model validation.

- Enterprises that build real-time monitoring, logging, and governance infrastructure will be far better positioned for regulatory scrutiny and business resilience.

Consider the pattern. A healthcare organisation deploys an AI model to assist clinicians in prioritising patient referrals. The model is assessed at deployment: its training data is reviewed, its outputs are tested against known cases, a risk rating is assigned. Everything passes. The model goes live, and the governance conversation moves to the next initiative.

Fourteen months later, the patient population served by this organisation has shifted. A significant proportion of referrals now come from a demographic that was underrepresented in the original training dataset. The model’s performance on this population is measurably worse than its overall performance metrics suggest. Nobody has noticed, because the monitoring framework that would detect this kind of distributional shift was not built into the deployment. The initial risk assessment was accurate at the time it was conducted. It is no longer accurate now. And the organisation has no mechanism to know that.

This is not an edge case. It is the natural consequence of treating risk assessment as an event rather than a process. The EU AI Act, whose obligations for high-risk AI systems begin to apply in August 2026, is designed to close this gap: it requires continuous risk management rather than point-in-time review for any AI system that materially affects human outcomes in regulated domains.

The Static Assessment Problem

The dominant model of AI risk assessment in enterprise organisations is static. A team conducts a review at the point of deployment, using a framework — the NIST AI Risk Management Framework, ISO 42001, an internal methodology — to evaluate the system against a defined set of criteria. The output is a risk rating and a set of mitigations. The assessment is documented. A governance committee signs off. The model enters production.

This approach was developed when AI systems were less dynamic, deployed in narrower contexts, and updated less frequently. It made reasonable sense when the primary governance question was “is this system safe to deploy?” It does not address the question that now matters most: “is this system safe right now, given what it is actually doing in production?”

AI models change. They are retrained on new data. They encounter inputs that differ from those in their training set. Their operating context shifts as the populations they serve change, as the business processes they support evolve, and as they interact with other systems that are themselves changing. A risk assessment that does not update with the model is not capturing the current risk profile of the system. It is capturing a snapshot of what the system was, not what it has become.
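One way to make "inputs that differ from those in the training set" measurable is a drift statistic computed on a schedule. The sketch below uses the Population Stability Index (PSI) over category shares. It is an illustration, not a prescribed method: the group labels and counts are invented, and the function name is hypothetical.

```python
import math

def population_stability_index(baseline, current, eps=1e-6):
    """Population Stability Index between two sets of category counts.

    `baseline` and `current` map a category label to a count. Shares are
    floored at `eps` so a category missing from one side does not cause a
    division by zero or log(0).
    """
    base_total = sum(baseline.values())
    curr_total = sum(current.values())
    psi = 0.0
    for category in set(baseline) | set(current):
        b = max(baseline.get(category, 0) / base_total, eps)
        c = max(current.get(category, 0) / curr_total, eps)
        psi += (c - b) * math.log(c / b)
    return psi

# Hypothetical counts: group_b was 20% of the training data
# and is now 50% of production traffic.
training_mix = {"group_a": 800, "group_b": 200}
production_mix = {"group_a": 500, "group_b": 500}

psi = population_stability_index(training_mix, production_mix)
print(f"PSI = {psi:.3f}")
```

With these made-up numbers the PSI comes out at roughly 0.42. A commonly quoted rule of thumb treats values above about 0.25 as a significant shift, but the right threshold for a given system is a judgement call for its risk owners.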

Why Most Risk Frameworks Fail in Practice

The most widely used AI risk frameworks — NIST AI RMF, ISO/IEC 42001, and the EU AI Act’s own requirements — are sound conceptual structures. They identify the right dimensions of risk: safety, fairness, transparency, accountability, robustness. They provide governance vocabulary and a logical sequence for thinking about risk across the AI lifecycle. They are genuinely useful as organising principles.

They fail in practice for a reason that has nothing to do with their content. They fail because most organisations implement them as documentation exercises rather than as operational disciplines. The framework produces a document. The document satisfies a governance requirement. The governance requirement is met. The model continues running. The framework has done its job, at least in the narrow sense that a box has been checked. It has not done the job that matters, which is ensuring that the actual behaviour of an actual AI system, in actual production conditions, remains within acceptable risk parameters over time.

The European Data Protection Supervisor’s 2025 guidance on risk management of AI systems is explicit on this point: risk management must span the entire lifecycle of an AI system, and logging and record-keeping mechanisms must be embedded into AI systems so that key events, system updates, performance metrics, and anomalies are automatically captured. This is not a description of a periodic review. It is a description of infrastructure.
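As a rough sketch of what embedded logging can look like, the snippet below writes key events as structured JSON records using Python's standard `logging` module. The field names, event types, and the `audit_event` helper are assumptions made for illustration; they are not taken from the guidance itself.

```python
import json
import logging
from datetime import datetime, timezone

# Basic configuration for the sketch; a real deployment would route these
# records to durable, access-controlled storage.
logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("ai_audit")

def audit_event(system_id, event_type, **details):
    """Build one JSON audit record, log it, and return the serialised line.

    `details` must contain JSON-serialisable values only.
    """
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        "event_type": event_type,
        "details": details,
    }
    line = json.dumps(record, sort_keys=True)
    logger.info(line)
    return line

# Hypothetical events of the kinds the guidance lists.
audit_event("referral-triage", "system_update", model_version="2.3.1")
audit_event("referral-triage", "performance_metric", name="accuracy", value=0.88)
audit_event("referral-triage", "anomaly", name="accuracy", value=0.71)
```

Because every record carries a timestamp, a system identifier, and an event type, the same stream can feed dashboards, alerting, and an audit trail without a separate documentation step.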

The Third-Party Vendor Risk Gap

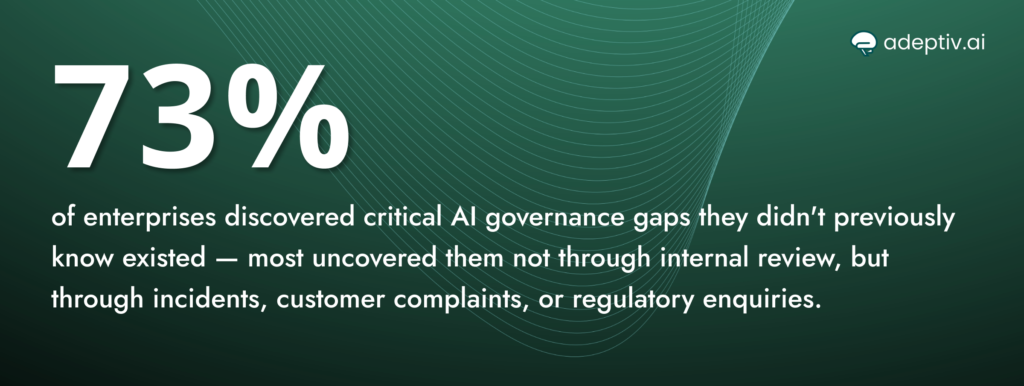

There is a dimension of AI risk assessment that enterprise governance frameworks almost universally under-address: the AI that enters organisations through third parties. A financial institution’s risk assessment process may be rigorous for the models its data science team builds internally. It is rarely comparably rigorous for the AI capabilities embedded in the vendor software it uses for fraud detection, customer service, portfolio management, and operational efficiency.

This is a structural blind spot. The EU AI Act’s obligations on a deployer (the organisation using an AI system) are not suspended because the system was built by a third party. A deployer of a high-risk AI system is required to use it in accordance with the provider’s instructions, to assign appropriate human oversight, to monitor its operation and retain its logs, and to cooperate with regulatory investigations. These obligations apply whether the system was built in-house or procured from a vendor.

Most organisations have not mapped their third-party AI landscape with anything approaching the rigour this requires. AI enters through vendor tools, SaaS platform features, API integrations, and embedded models in software that procurement teams acquired before AI governance was a serious organisational priority. Conducting a meaningful risk assessment for a system you have not inventoried is not possible. And conducting a meaningful assessment for a system whose technical documentation is held by a vendor who may or may not be willing or able to provide it is harder still.
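An inventory does not need to be elaborate to be useful. The sketch below shows one possible shape for an inventory record and a simple gap check. The fields, system names, vendor name, and gap rules are all hypothetical and would need to reflect an organisation's own obligations.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class AISystemRecord:
    name: str
    vendor: Optional[str]      # None for systems built in-house
    high_risk: bool            # outcome of the organisation's own classification
    has_technical_docs: bool   # documentation obtained and on file
    has_named_overseer: bool   # a person with authority to intervene

def assessment_gaps(record):
    """Return the reasons a high-risk system cannot yet be assessed properly."""
    if not record.high_risk:
        return []
    gaps = []
    if not record.has_technical_docs:
        gaps.append("technical documentation not on file")
    if not record.has_named_overseer:
        gaps.append("no named human overseer")
    return gaps

# Hypothetical inventory entries.
inventory = [
    AISystemRecord("credit-scoring", None, True, True, True),
    AISystemRecord("fraud-screening", "ExampleVendor", True, False, True),
]

for record in inventory:
    for gap in assessment_gaps(record):
        print(f"{record.name}: {gap}")
```

Here the vendor-supplied system is flagged because its documentation has not been obtained, which is the situation described above.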

Risk Is Not Primarily a Technical Problem

The framing of AI risk as a technical challenge — a problem of model accuracy, bias metrics, and performance benchmarks — is accurate but incomplete. The risks that cause the most serious operational damage are rarely purely technical. They are systemic: they arise from the interaction of technical systems with organisational processes, human decision-making, regulatory environments, and customer expectations.

A model that is technically sound but deployed in a process where human overseers lack the authority or capacity to intervene when it produces problematic outputs is an operational risk, not a technical one. A model whose outputs are technically accurate but presented to end users in ways that encourage over-reliance creates a liability that no amount of model validation can eliminate. A model that performs correctly on the metrics it was evaluated against but optimises for outcomes that have unintended consequences for specific customer segments creates reputational and regulatory risk that does not appear in any technical assessment.

Effective AI risk assessment requires governance that operates at the intersection of the technical, the operational, and the legal. It requires risk functions and data science teams to share a common vocabulary and a common understanding of what “risk” means in the context of a specific AI system deployed in a specific business process. It requires legal and compliance teams to understand the technical architecture of the systems they are governing well enough to evaluate whether oversight mechanisms are real or nominal. And it requires executive leadership to understand that AI risk is not a technology department problem — it is a business problem with technology at its centre.

What Continuous Risk Management Actually Looks Like

Moving from periodic assessment to continuous risk management is not primarily a question of conducting more frequent reviews. It is a question of building monitoring into the operational infrastructure of AI systems so that relevant risk signals are captured automatically and surfaced to the right decision-makers without requiring a formal governance exercise to generate them.

This means instrumenting AI systems to log not just performance metrics but fairness metrics, anomaly indicators, and decision patterns that might signal drift from intended behaviour. It means establishing alert thresholds that trigger review when those metrics move beyond defined bounds, rather than waiting for a quarterly governance cycle to catch what might have happened months earlier. It means maintaining technical documentation as a living record of system changes rather than a historical document frozen at deployment.
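The alerting idea can be sketched in a few lines. The metric names and bounds below are placeholders chosen for illustration, not recommended values, and the `breached` helper is hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Threshold:
    metric: str
    lower: float
    upper: float

def breached(thresholds, observed):
    """Return the names of metrics that are missing or outside their bounds.

    A metric that was not reported at all is treated as a breach, because a
    gap in monitoring is itself a risk signal.
    """
    alerts = []
    for t in thresholds:
        value = observed.get(t.metric)
        if value is None or not (t.lower <= value <= t.upper):
            alerts.append(t.metric)
    return alerts

# Placeholder bounds covering performance, fairness, and drift.
thresholds = [
    Threshold("accuracy", 0.85, 1.00),
    Threshold("selection_rate_ratio", 0.80, 1.25),
    Threshold("input_psi", 0.00, 0.25),
]

# Hypothetical metrics from the latest monitoring window.
observed = {"accuracy": 0.88, "selection_rate_ratio": 0.72, "input_psi": 0.10}

alerts = breached(thresholds, observed)
print(alerts)
```

In this example only the fairness ratio falls outside its bounds, so it is the only metric that would trigger a review.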

It also means having humans genuinely embedded in oversight — not as rubber stamps on AI outputs, but as informed participants with real authority to intervene. The EU AI Act’s requirement for human oversight in high-risk systems is not satisfied by a policy that says “humans can review AI decisions.” It is satisfied by demonstrable evidence that humans do review AI decisions, in ways that affect outcomes, with appropriate training and authority.
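Evidence of this kind can be derived from decision logs. The sketch below assumes each logged decision records whether a human reviewed it and whether the reviewer overrode the model. Both field names are hypothetical.

```python
def oversight_summary(decisions):
    """Summarise how often humans reviewed and overrode model decisions.

    `decisions` is a list of dicts with boolean 'reviewed' and 'overridden'
    keys. Overrides are only counted for decisions that were reviewed.
    """
    total = len(decisions)
    reviewed = sum(d["reviewed"] for d in decisions)
    overridden = sum(d["reviewed"] and d["overridden"] for d in decisions)
    return {
        "review_rate": reviewed / total if total else 0.0,
        "override_rate": overridden / reviewed if reviewed else 0.0,
    }

# Hypothetical log: 10 decisions, 8 reviewed, 2 of those overridden.
decisions = (
    [{"reviewed": True, "overridden": True}] * 2
    + [{"reviewed": True, "overridden": False}] * 6
    + [{"reviewed": False, "overridden": False}] * 2
)

summary = oversight_summary(decisions)
print(summary)
```

Neither number proves that oversight is meaningful on its own. An override rate that sits at zero for months, though, is a reasonable prompt to ask whether reviewers have the information and authority to disagree with the system.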

In our experience of working with regulated organisations that are building this kind of continuous governance infrastructure, the most consistent finding is that it changes the conversation. When risk signals are visible in real time, governance stops being a retrospective compliance exercise and starts being a management discipline. Decisions about model retraining, deployment contexts, and oversight capacity are made with current information rather than historical approximations.

The organisations that will navigate the enforcement environment of the next two years most effectively are not those with the most comprehensive initial risk assessments. They are those whose governance infrastructure makes the current state of their AI systems continuously visible — to their own leadership, and to regulators who ask to see it.

FAQs

1. What is AI Risk Assessment in enterprise governance?

AI Risk Assessment is the process of identifying, monitoring, and managing risks associated with AI systems across their full lifecycle. It includes fairness, bias, safety, transparency, compliance, vendor risk, and operational oversight.

2. Why is a one-time AI Risk Assessment no longer enough?

Because AI systems change after deployment through retraining, data drift, new user behaviour, and changing business contexts. A static assessment only reflects the system at one point in time, not its current operational reality.

3. How does the EU AI Act impact AI Risk Assessment?

The EU AI Act requires continuous risk management for high-risk AI systems, including human oversight, logging, technical documentation, post-market monitoring, and audit readiness—not just initial deployment approval.

4. Why is third-party vendor AI a major governance risk?

Organisations remain responsible as deployers even when AI is purchased from vendors. If third-party AI systems are not properly inventoried, monitored, and documented, compliance and operational risks remain with the enterprise.

5. What does continuous AI Risk Management look like in practice?

It includes live monitoring of model performance, fairness tracking, anomaly detection, audit trails, documentation updates, human intervention mechanisms, and alert systems that trigger governance reviews automatically.

6. How can enterprises improve AI Risk Assessment readiness?

By building governance infrastructure such as AI inventories, real-time monitoring, policy enforcement, technical documentation, vendor oversight frameworks, and audit-ready evidence that proves compliance continuously.