Dynamic AI Risk Assessment & Risk Generation

At Adeptiv AI, we help organizations navigate the complexities of AI risk with science-driven, comprehensive AI Risk Assessment solutions.

Why Conventional AI Risk Management Fails at Scale

Traditional risk management — built for software systems, financial instruments, and operational processes — was not designed for AI. AI introduces a fundamentally different risk profile: probabilistic outputs, opaque reasoning, emergent behavior, continuous drift, and context-dependent failure modes that static checklists and periodic audits cannot capture. The result is a growing gap between AI deployment velocity and the organization’s ability to understand, quantify, and govern the risk it is accumulating.

Fragmented Risk Visibility

AI risks are scattered across spreadsheets, emails, and siloed teams, preventing centralized oversight and consistent enterprise-wide tracking.

No Standardized Scoring

Organizations lack a consistent methodology to quantify, compare, and prioritize AI-specific risks effectively.

Regulatory Traceability Gaps

Linking identified risks to regulatory requirements during audits is manual, time-consuming, and often incomplete.

Unclear Ownership & Accountability

Mitigation efforts frequently lack defined ownership, visibility, and structured follow-through across stakeholders.

Evolving Risk Posture

AI risk profiles shift over time, but historical tracking and trend analysis are rarely maintained.

Limited Production Monitoring

AI systems in production lack continuous oversight for drift, bias, and performance degradation, allowing emerging risks to go undetected until impact occurs.

EU AI Act Risk Classification Framework: What's at Stake

UNACCEPTABLE

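Prohibited outright. Examples include manipulative techniques that distort behavior, social scoring, and real-time remote biometric identification in publicly accessible spaces for law enforcement, subject to narrow exceptions.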

HIGH RISK

Mandatory conformity assessment, EU database registration, human oversight, audit logging.

LIMITED RISK

Transparency obligations. Users must be informed they are interacting with an AI system.

MINIMAL RISK

No specific obligations. Voluntary codes of conduct encouraged for best practice.
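To make the tier logic concrete, here is a deliberately simplified triage sketch. It assumes three hypothetical yes/no intake answers and returns the most stringent tier that applies. Real classification turns on the Act's Article 5 prohibitions and its Annex I and Annex III high-risk criteria, and a high-risk system can also carry limited-risk transparency duties, which this sketch does not model.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Headline obligations per tier, paraphrased from the tier descriptions above.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["prohibited: do not place on the market or use"],
    RiskTier.HIGH: [
        "conformity assessment",
        "EU database registration",
        "human oversight",
        "audit logging",
    ],
    RiskTier.LIMITED: ["inform users they are interacting with an AI system"],
    RiskTier.MINIMAL: ["voluntary codes of conduct"],
}


@dataclass
class SystemProfile:
    # Hypothetical intake answers; real classification depends on the Act's
    # Article 5 prohibitions and Annex I / Annex III high-risk criteria.
    uses_prohibited_practice: bool
    in_high_risk_use_case: bool
    interacts_with_people: bool


def triage(profile: SystemProfile) -> RiskTier:
    """Return the most stringent tier whose trigger applies."""
    if profile.uses_prohibited_practice:
        return RiskTier.UNACCEPTABLE
    if profile.in_high_risk_use_case:
        return RiskTier.HIGH
    if profile.interacts_with_people:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


# A customer-facing chatbot with no prohibited or high-risk use case.
chatbot = SystemProfile(False, False, True)
tier = triage(chatbot)
print(tier.value, OBLIGATIONS[tier])
```

Checking the strictest trigger first mirrors the pyramid above: a system is only considered for a lower tier once every higher tier has been ruled out.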

The Governance Imperative

Most EU AI Act high-risk obligations apply from August 2, 2026, and obligations for high-risk AI embedded in products covered by EU harmonization legislation follow on August 2, 2027. ISO/IEC 42001 requires a documented AI risk assessment process. The MAP and MEASURE functions of the NIST AI RMF, a voluntary framework, call for contextual risk identification and for analyzing, assessing, and tracking identified risks. Organizations without automated, context-aware risk assessment infrastructure face regulatory exposure and cannot realistically scale manual review to keep pace with AI deployment.

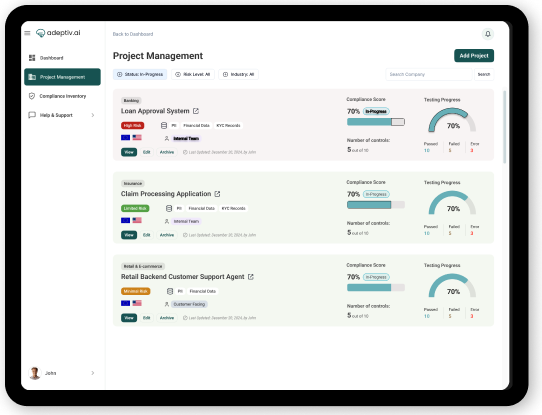

How We Solve These Problems

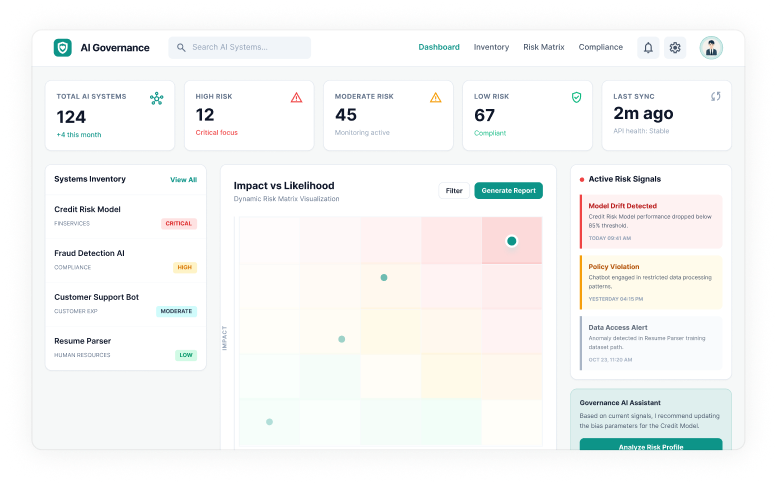

Our platform empowers you to systematically evaluate the risks posed by your AI systems across ethical, regulatory, security, and operational dimensions, enabling responsible and compliant AI adoption at scale.

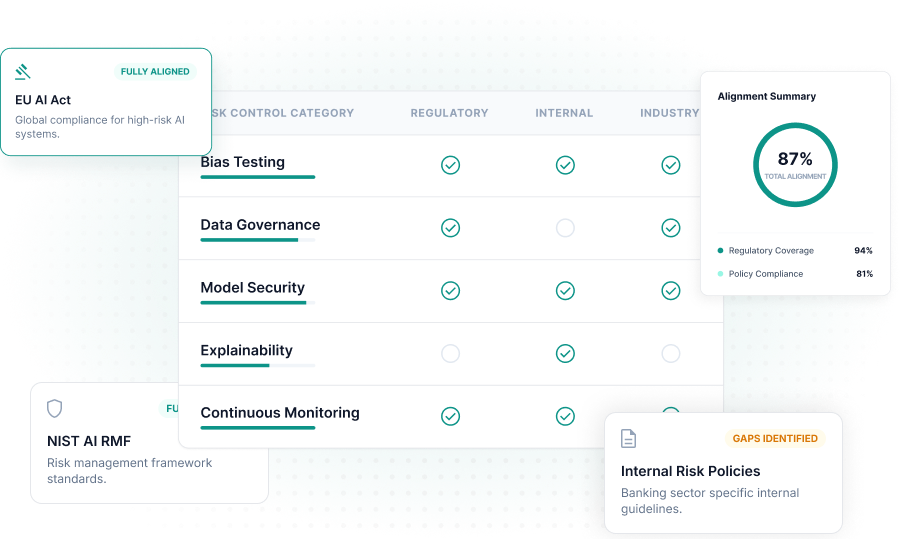

Aligned to global AI standards

Comprehensive Risk Frameworks

Evaluate AI systems against leading global standards.

- Map controls to regulatory requirements

- Align with internal risk policies

- Benchmark against industry best practices
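Mapping controls to regulatory requirements is essentially a traceability matrix. The sketch below, under stated assumptions, shows one way to find requirements with no mapped control. The requirement IDs are made-up labels keyed to EU AI Act Articles 9 (risk management system), 12 (record-keeping), and 14 (human oversight); the control names are hypothetical.

```python
# Illustrative requirement IDs keyed to EU AI Act articles; control names are hypothetical.
REQUIREMENTS = {
    "EUAIA-Art9": "Risk management system",
    "EUAIA-Art12": "Record-keeping",
    "EUAIA-Art14": "Human oversight",
}

# Each internal control lists the requirement IDs it satisfies.
CONTROLS = {
    "CTRL-RISK-REGISTER": ["EUAIA-Art9"],
    "CTRL-EVENT-LOGGING": ["EUAIA-Art12", "EUAIA-Art9"],
}


def coverage(requirements, controls):
    """Map each requirement to its controls and list requirements with none.

    Control mappings that point at unknown requirement IDs are ignored.
    """
    mapped = {req_id: [] for req_id in requirements}
    for control, req_ids in controls.items():
        for req_id in req_ids:
            if req_id in mapped:
                mapped[req_id].append(control)
    gaps = [req_id for req_id, ctrls in mapped.items() if not ctrls]
    return mapped, gaps


mapped, gaps = coverage(REQUIREMENTS, CONTROLS)
print(gaps)
```

Keeping the mapping in one structure means the same data answers both audit questions: "which controls cover this requirement?" and "which requirements are uncovered?"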

Intelligent classification of AI systems

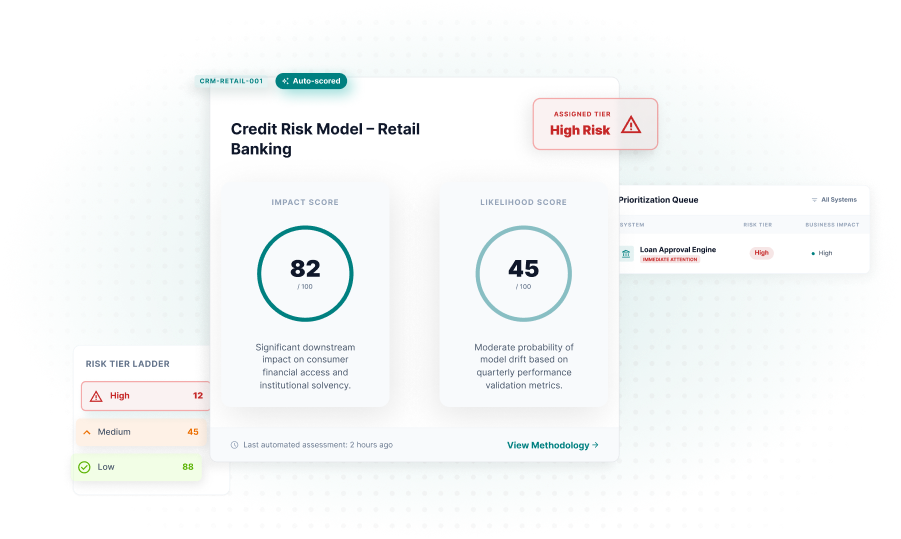

Automated Risk Scoring

Score and tier AI systems automatically.

- Calculate impact and likelihood scores

- Classify systems by risk tier

- Prioritize remediation by business criticality
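A common way to combine impact and likelihood is the classic 5×5 risk matrix, where the score is their product (1 to 25). The sketch below assumes that scale, hypothetical tier thresholds, and a 1 to 3 business-criticality rating; it is an illustration, not the platform's scoring model.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    impact: int       # assumed scale: 1 (negligible) to 5 (severe)
    likelihood: int   # assumed scale: 1 (rare) to 5 (almost certain)
    criticality: int  # assumed business criticality: 1 (low) to 3 (high)


def risk_score(system: AISystem) -> int:
    """Impact x likelihood on a 1-25 scale."""
    for value in (system.impact, system.likelihood):
        if not 1 <= value <= 5:
            raise ValueError("impact and likelihood must be between 1 and 5")
    return system.impact * system.likelihood


def risk_tier(score: int) -> str:
    # Hypothetical thresholds; calibrate to your organization's risk appetite.
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


def prioritize(systems):
    """Order by risk score, breaking ties by business criticality (highest first)."""
    return sorted(systems, key=lambda s: (risk_score(s), s.criticality), reverse=True)


portfolio = [
    AISystem("chatbot", impact=2, likelihood=4, criticality=1),
    AISystem("credit-model", impact=5, likelihood=3, criticality=3),
    AISystem("hr-screener", impact=4, likelihood=2, criticality=3),
]
for system in prioritize(portfolio):
    score = risk_score(system)
    print(system.name, score, risk_tier(score))
```

Here the chatbot and the HR screener tie on score, so criticality decides which is remediated first. Sorting by criticality first, or multiplying it into the score, are equally valid design choices depending on policy.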

Continuous oversight as systems evolve

Dynamic Risk Monitoring

Monitor AI risk posture in real time.

- Track drift and performance degradation

- Trigger alerts on policy violations

- Reassess risk after system updates
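Drift tracking often starts with a distribution-shift statistic. The sketch below uses the Population Stability Index (PSI), one widely used metric, over pre-binned counts, and raises an alert above 0.2, a commonly cited rule-of-thumb threshold that is treated here as an assumption. The function names are hypothetical.

```python
import math


def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions.

    `expected` (baseline) and `actual` (production) are per-bin counts
    over the same bins.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    e_total, a_total = sum(expected), sum(actual)
    if e_total == 0 or a_total == 0:
        raise ValueError("distributions must not be empty")
    total = 0.0
    for e, a in zip(expected, actual):
        # Clamp empty bins to eps so the log term stays finite.
        e_pct = max(e / e_total, eps)
        a_pct = max(a / a_total, eps)
        total += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return total


def check_drift(expected, actual, threshold=0.2):
    """Return an alert message if PSI exceeds the assumed threshold, else None."""
    score = psi(expected, actual)
    if score > threshold:
        return f"ALERT: feature drift detected (PSI={score:.3f})"
    return None


baseline = [25, 25, 25, 25]    # training-time distribution of one feature
production = [5, 15, 30, 50]   # same feature, observed in production
print(check_drift(baseline, production))
```

In a monitoring pipeline, a check like this would run per feature on a schedule, and a non-empty result would feed the alerting and reassessment steps listed above.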

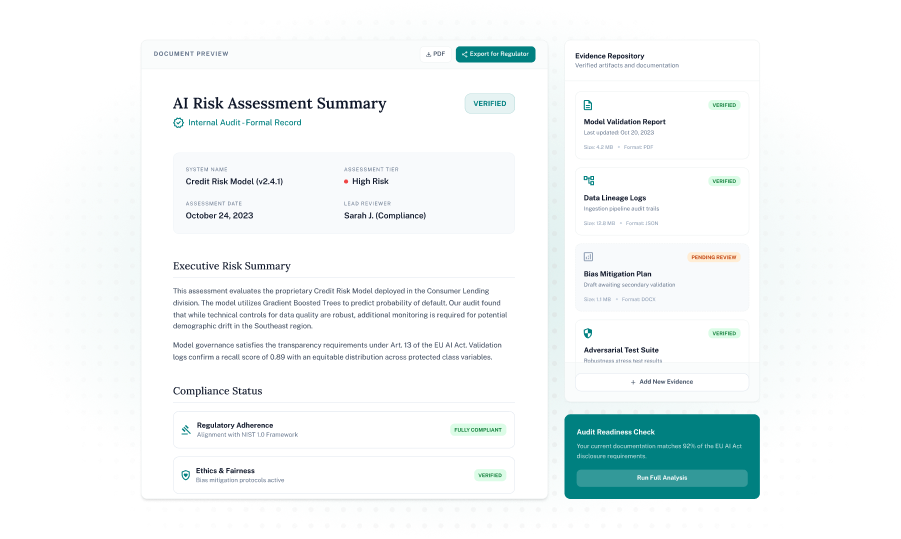

Transparent documentation for regulators

Audit-Ready Reporting

Generate clear, regulator-ready risk reports.

- Produce comprehensive risk summaries

- Maintain evidence for compliance reviews

- Demonstrate responsible AI governance
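A regulator-ready summary is ultimately an aggregation over the assessed portfolio. This minimal sketch assumes each system is a dict with hypothetical keys (`name`, `tier`, `owner`, `open_mitigations`) and emits JSON that could be attached as evidence to a compliance review. It also surfaces systems without an owner, one of the accountability gaps described earlier.

```python
import json
from collections import Counter
from datetime import date


def risk_summary(systems, as_of: date) -> str:
    """Build a JSON risk summary from a list of assessed systems (hypothetical schema)."""
    tiers = Counter(s["tier"] for s in systems)
    report = {
        "as_of": as_of.isoformat(),
        "systems_assessed": len(systems),
        "systems_by_tier": dict(sorted(tiers.items())),
        # Systems with a missing or empty owner field.
        "unowned_systems": sorted(s["name"] for s in systems if not s.get("owner")),
        # Only systems that still have mitigation work outstanding.
        "open_mitigations": {
            s["name"]: s["open_mitigations"] for s in systems if s.get("open_mitigations")
        },
    }
    return json.dumps(report, indent=2)


portfolio = [
    {"name": "credit-model", "tier": "high", "owner": "risk-team", "open_mitigations": ["bias audit"]},
    {"name": "chatbot", "tier": "limited", "owner": "", "open_mitigations": []},
    {"name": "hr-screener", "tier": "high", "owner": None, "open_mitigations": ["human review step"]},
]
print(risk_summary(portfolio, date(2026, 1, 15)))
```

Passing the reporting date in explicitly, rather than reading the clock, keeps each report reproducible when it is regenerated for an audit.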

Measurable ROI & Business Impact

Why AI Risk Assessment Is Essential

As AI models and systems become more embedded in critical business functions, managing their risks is vital to safeguard trust, comply with regulations, and prevent unintended consequences. Proactive risk assessment enables:

Frequently Asked Questions (FAQs)

What is AI Inventory Management?

- AI Inventory Management involves keeping track of all the AI systems, models, agents, data sources, and third-party tools in use throughout an organization. It’s about cataloging and maintaining an up-to-date record of everything related to AI.

Why is AI Inventory Management critical for AI governance?

- You can’t govern, secure, or audit AI systems you don’t know exist. A complete inventory of AI tools provides the visibility needed to assess risks, ensure compliance, maintain accountability, and oversee each system across its lifecycle.

What types of AI systems should be included in an AI inventory?

- A complete AI inventory should cover internally developed models, third-party AI tools, SaaS applications with embedded AI, large language models, agents, APIs, plugins, and AI features built into business applications.
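As an illustration, an inventory record can be modeled as a small typed structure whose categories mirror the list above. The schema, field names, and helper functions below are hypothetical, a minimal sketch rather than a prescribed format.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class AssetType(Enum):
    # Categories mirror the inventory scope described above.
    INTERNAL_MODEL = "internally developed model"
    THIRD_PARTY_TOOL = "third-party AI tool"
    SAAS_WITH_AI = "SaaS application with embedded AI"
    LLM = "large language model"
    AGENT = "agent"
    API = "API"
    PLUGIN = "plugin"
    EMBEDDED_FEATURE = "AI feature in a business application"


@dataclass
class InventoryEntry:
    # Hypothetical minimal schema; production inventories track far more.
    name: str
    asset_type: AssetType
    owner: str
    vendor: Optional[str] = None
    data_sources: List[str] = field(default_factory=list)
    approved: bool = False


def count_by_type(entries):
    """Summarize the inventory by asset category."""
    return Counter(entry.asset_type for entry in entries)


def pending_approval(entries):
    """Names of inventoried assets that have not yet been approved."""
    return [entry.name for entry in entries if not entry.approved]


inventory = [
    InventoryEntry("churn-model", AssetType.INTERNAL_MODEL, "data-science",
                   data_sources=["crm"], approved=True),
    InventoryEntry("support-assistant", AssetType.LLM, "customer-ops", vendor="vendor-a"),
    InventoryEntry("crm-autocomplete", AssetType.EMBEDDED_FEATURE, "sales-ops", vendor="vendor-b"),
]
print(count_by_type(inventory))
print(pending_approval(inventory))
```

Using an enum for the asset type keeps categories consistent across teams, which makes the per-category summary trustworthy.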

What is Shadow AI, and why is it risky?

- Shadow AI refers to AI tools that employees or teams adopt without official approval or oversight. Because these tools sit outside governance controls, they can leak data, expose intellectual property, violate compliance rules, and introduce regulatory risk.
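One simple way to surface shadow AI is to compare traffic to known AI services against the approved inventory. The sketch below assumes proxy log lines of the hypothetical form `<user> <domain>` and a hypothetical watch-list of AI service domains (placeholder `.example` names), and reports unapproved services together with the users reaching them.

```python
# Hypothetical watch-list of AI service domains (placeholder names).
KNOWN_AI_DOMAINS = {
    "chat.ai-vendor-a.example",
    "api.ai-vendor-b.example",
    "assist.ai-vendor-c.example",
}


def find_shadow_ai(log_lines, approved_domains, known_ai_domains=KNOWN_AI_DOMAINS):
    """Flag AI services seen in proxy logs that are not in the approved inventory.

    Each log line is assumed to look like "<user> <domain>".
    Returns {domain: sorted list of users}.
    """
    approved = {d.lower() for d in approved_domains}
    findings = {}
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # skip malformed lines
        user, domain = parts[0], parts[1].lower()
        if domain in known_ai_domains and domain not in approved:
            findings.setdefault(domain, set()).add(user)
    return {domain: sorted(users) for domain, users in sorted(findings.items())}


logs = [
    "alice chat.ai-vendor-a.example",
    "bob api.ai-vendor-b.example",
    "carol chat.ai-vendor-a.example",
    "dave intranet.example",
    "malformed line here",
]
print(find_shadow_ai(logs, approved_domains=["api.ai-vendor-b.example"]))
```

Each finding is a candidate for review: either onboard the tool into the inventory with an owner, or block it.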

How does AI Inventory Management support regulatory compliance?

- AI inventory management helps organizations comply with regulations and standards such as the EU AI Act, GDPR, and ISO/IEC 42001. These frameworks expect organizations to document their AI systems and the data they process, assess potential risks, and demonstrate how those systems are monitored. An AI inventory makes it easier to track everything, assign ownership, and be ready for audits.